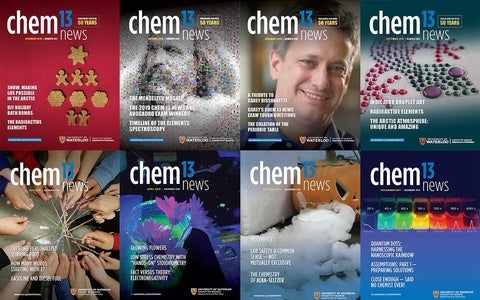

For more than 50 years, from 1968 to 2022, the Department of Chemistry at the University of Waterloo published an informal magazine for teachers of introductory chemistry courses at the high school and first-year college levels.

Our archives present the 100 most-accessed articles since 2012.

Browse by category

Activities

Series

- IYPT Timeline of Elements project

- Models and Analogies

- AP Chemistry Thought Lab

- Chem 13 News | Tough Questions series

- Other Chemistry contest questions and answers

- Sharing Chemistry with the Community

Special issues

- November 2019 Special Issue: Tribute to Carey Bissonnette

- Fall 2022 Special Issue: Chemistry and Inuit Life and Culture

Other

Browse by lesson

Download complete sections from our archives

Looking for an article that is not in the top 100? Download our archives and view them offline in your browser. To access the files, unzip the download. Within the extracted folder, you can open an HTML file directly or browse using the accompanying menu, "FULL_MAP.html".

- Atomic theory and bonding.zip

- Electrochemistry.zip

- Energy changes and rates of reaction.zip

- First day review, games and cartoons.zip

- Gases.zip

- Matter: chemical and physical properties.zip

- Organic chemistry.zip

- Periodic table and trends.zip

- Quantities: moles, stoichiometry, and significant digits.zip

- Reactions.zip

- Safety.zip

- Sharing chemistry with the community series.zip

- Systems: equilibrium, acids, and bases.zip
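
If you would rather script the unzip step than do it by hand, the following is a minimal Python sketch of the instructions above. It assumes a section has already been downloaded (for example "Gases.zip") and that the menu file is named "FULL_MAP.html" as stated above; the function names and the destination folder are hypothetical, and the folder layout inside each zip is not known, so the code searches all subfolders.

```python
import webbrowser
import zipfile
from pathlib import Path


def extract_archive(zip_path, dest_dir):
    """Unzip one downloaded section and return the HTML files found inside."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(dest)
    # The layout inside the zip is an unknown here, so search every subfolder.
    return sorted(dest.rglob("*.html"))


def find_menu(html_files, menu_name="FULL_MAP.html"):
    """Return the menu page if it is among the files, otherwise None."""
    for page in html_files:
        if page.name == menu_name:
            return page
    return None


def open_section(zip_path, dest_dir):
    """Extract a section, then open its menu (or first page) in the browser."""
    pages = extract_archive(zip_path, dest_dir)
    target = find_menu(pages) or (pages[0] if pages else None)
    if target is not None:
        # Local files are opened through a file:// URI, so no network is needed.
        webbrowser.open(target.resolve().as_uri())
    return target
```

Calling `open_section("Gases.zip", "chem13-archive")` would extract the section into a folder named "chem13-archive" and open the menu page in your default browser. If no menu file is found, it opens the first HTML file instead.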