BCI-Controlled Wheelchair

Researchers: Dalya Al-Mfarej, Junsong Li, and Taha Liaqat

Current assistive mobility solutions for individuals with ALS and similar neurodegenerative conditions remain limited in their ability to provide intuitive, non-invasive control of powered wheelchairs. This research addresses that gap by developing and evaluating a mobile brain-computer interface (BCI) for wheelchair control that combines steady-state visually evoked potential (SSVEP) signals with eye-tracking technology. By using dry electrodes for rapid setup and augmented reality (AR) for stimulus presentation, the system is designed to be practical and accessible outside laboratory settings. Two control schemes, a BCI-only controller and a hybrid BCI/eye-tracking controller, were tested on a LUCI-equipped powered wheelchair with integrated obstacle avoidance, enabling a direct comparison of the two approaches. As BCI and AR technologies continue to advance rapidly, their integration into real-world assistive devices must be rigorously evaluated to ensure both safety and usability for individuals with severe mobility impairments.

Physiological Readiness for Therapy System (PRÊTS)

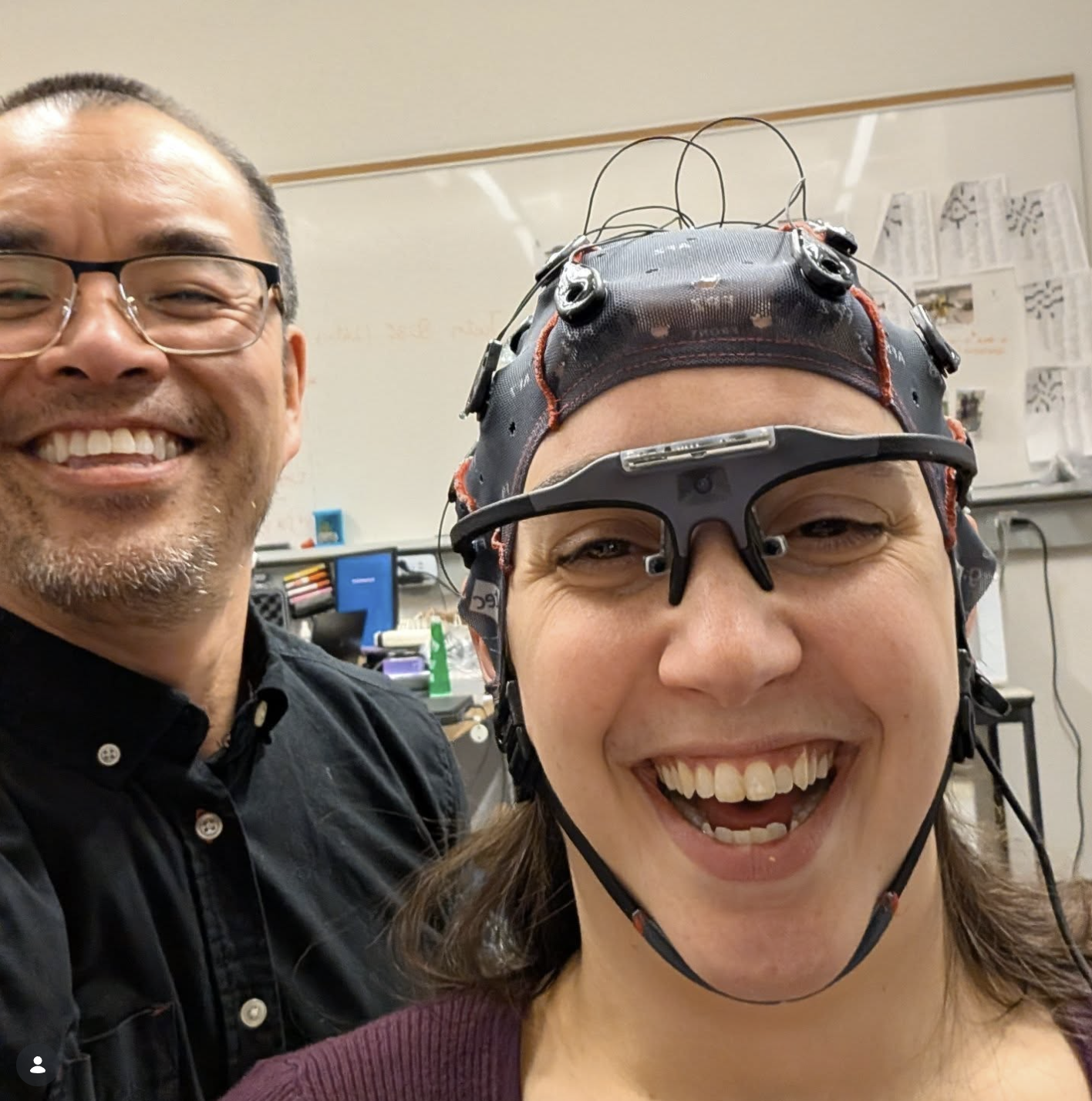

Researchers: Naomi Thomas, Dalya Al-Mfarej, Justin Zhou, and Alyson Colpitts

The Challenge Point Framework proposes that optimal learning occurs when task difficulty is adapted to the learner's current skill level. Currently, task difficulty in rehabilitation is adjusted using subjective measures, but with growing demand for rehabilitation, it is critical to optimize the motor learning process. Physiological signals such as EEG can be used to model cognitive and physical workload, revealing how well patients can cope with different levels of task difficulty. The aim of this study is to advance the rehabilitation process by investigating EEG and other physiological patterns corresponding to cognitive and physical effort, in order to gauge whether and how task difficulty should be adapted.

Spatial Orientation of Neural Auditory Responses (SONAR)

Researchers: Naomi Thomas

Hearing aids and cochlear implants struggle in noisy, multi-speaker environments because they cannot tell which speaker the user is actually trying to listen to. This project tackles that gap by using EEG brain signals to decode the direction of a listener's auditory attention in real time. By identifying the neural markers of spatial attention, the goal is to build a wearable, adaptive system that can steer assistive hearing devices toward the speaker the user intends to hear.