🏆 Distinguished Paper Award — FORGE 2026

Overview

When a modern cloud service fails, engineers must trace the fault through a tangled web of microservices, databases, and hosts to find its origin, a process called root cause analysis (RCA). Multi-hop faults are especially treacherous: the visible symptoms may appear several service boundaries away from the true source. Large Language Models (LLMs), with their ability to reason over heterogeneous data and call external tools, are natural candidates for automating this process.

But how well do they actually reason? Most prior work embeds LLMs inside elaborate multi-agent pipelines, making it impossible to tell whether a failure stems from the model's own reasoning or from scaffolding around it. This project addresses that gap directly.

We designed a controlled evaluation framework that strips away confounding factors by relying on simplified agent architectures, deterministic tools, and structured knowledge graphs, exposing the LLM's reasoning in isolation. We evaluated six open-source LLMs across three workflows and two real-world microservice datasets, executing 48,000 fault scenarios (totaling 228 days of non-parallelized compute time). Beyond measuring accuracy, we developed a labeled taxonomy of 16 reasoning failure types and used an LLM-as-a-Judge evaluator to annotate over 3,000 inference traces. The result is the first empirical investigation into the isolated reasoning capabilities, and the characteristic failure modes, of LLM-based RCA agents.

Contributions

This study makes four concrete contributions:

- Evaluation framework. A controlled environment that isolates LLM reasoning from confounding factors, using simple agent architectures, deterministic tools, and explicit typed knowledge graphs for system context.

- Empirical study at scale. 48,000 fault scenarios, totaling 228 days of execution time, to assess the capabilities of six LLMs under several agentic workflows and one non-agentic workflow.

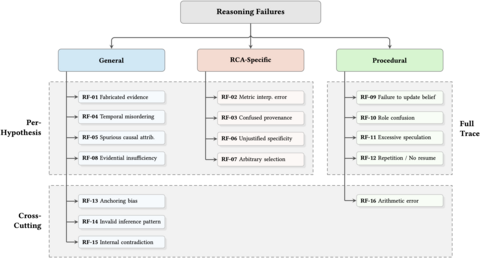

- Reasoning failure taxonomy. A labeled taxonomy of 16 common RCA reasoning failures, organized into general, RCA-specific, and procedural categories.

- LLM-as-a-Judge evaluator. A reasoning quality evaluator guided by the reasoning failure taxonomy and validated against human annotations (Cohen's κ = 0.92), enabling large-scale automated assessment of RCA reasoning traces.
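For readers unfamiliar with the agreement statistic mentioned above, Cohen's κ compares observed agreement between two annotators with the agreement expected by chance. The following is a minimal sketch of the standard formula, not the study's evaluation code; the function name and the sample labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and equally long")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's marginal label rates.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    categories = count_a.keys() | count_b.keys()
    p_e = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if p_e == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical annotations: 1 = reasoning failure present, 0 = absent.
judge_labels = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]
human_labels = [1, 1, 0, 0, 1, 0, 1, 0, 1, 1]
kappa = cohens_kappa(judge_labels, human_labels)
```

In this toy example the annotators agree on 9 of 10 items and chance agreement is 0.5, so κ comes out at about 0.8.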

Approach

Our framework is organized into three stages: data preparation, in which we distill multi-modal telemetry (logs, metrics, traces) into unified alerts and construct a typed system knowledge graph; RCA inference, where an LLM agent reasons under one of three workflows (Straight-Shot, ReAct, or Plan-and-Execute) to produce ranked fault hypotheses; and evaluation, which assesses both final output accuracy against ground-truth fault labels and the quality of intermediate reasoning traces using the LLM-as-a-Judge.
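To make the data preparation stage more concrete, the sketch below shows one way a typed knowledge graph, unified alerts, and a deterministic lookup tool could be represented. It is an illustration under assumptions, not the framework's actual schema: the class names, entity kinds, relation names, and the `neighbors` tool are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entity:
    name: str
    kind: str  # assumed kinds: "service", "database", "host"


@dataclass(frozen=True)
class Alert:
    entity: str
    modality: str  # "log", "metric", or "trace"
    message: str


@dataclass
class KnowledgeGraph:
    entities: dict = field(default_factory=dict)
    edges: set = field(default_factory=set)  # (source, relation, target)

    def add_entity(self, name, kind):
        self.entities[name] = Entity(name, kind)

    def add_edge(self, source, relation, target):
        if source not in self.entities or target not in self.entities:
            raise KeyError("both endpoints must be registered entities")
        self.edges.add((source, relation, target))

    def neighbors(self, name):
        """Deterministic tool: outgoing (relation, target) pairs, sorted."""
        return sorted((r, t) for s, r, t in self.edges if s == name)


# Hypothetical system: a checkout service calling a payment service.
kg = KnowledgeGraph()
kg.add_entity("checkout", "service")
kg.add_entity("payment", "service")
kg.add_entity("host-1", "host")
kg.add_edge("checkout", "calls", "payment")
kg.add_edge("checkout", "hosted_on", "host-1")

alerts = [Alert("payment", "metric", "latency above threshold")]
checkout_neighbors = kg.neighbors("checkout")
```

Sorting the tool output means the same query always returns the same answer in the same order, which is one simple way to keep tool behavior from adding variance to an agent's reasoning trace.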

We evaluated six open-source models—Llama 3.2 (3B), Qwen 3 (4B and 32B), Llama 3.3 (70B), Command R+ (104B), and DeepSeek-R1 (70B)—on two real-world microservice benchmarks (GAIA and OpenRCA).

End-to-end overview of the study methodology, including data preparation, RCA inference, and output quality and reasoning quality evaluation.

Taxonomy of Reasoning Failures

Through iterative open coding and paired LLM–human annotation, we identified 16 recurring reasoning failure types. These are organized into three categories reflecting the nature and scope of each failure.

General reasoning failures undermine the credibility of individual hypotheses or entire traces. Fabricated evidence (RF-01) and evidential insufficiency (RF-08) are the most prevalent failures in the dataset overall. Temporal misordering (RF-04), spurious causal attribution (RF-05), anchoring bias (RF-13), logical fallacies (RF-14), and internal contradictions (RF-15) round out this category. The high prevalence of these failures means that hypothesis claims frequently cannot be trusted at face value; effective RCA systems must apply evidence-sufficiency checks and knowledge-graph (KG) consistency filters before ranking or acting on hypotheses.
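One possible shape for such checks is sketched below. It is an assumption-laden illustration rather than a component of the study: the hypothesis format (`path`, `evidence`), the directed edge set, and the `min_evidence` threshold are all hypothetical.

```python
def check_hypothesis(hypothesis, alert_ids, kg_edges, min_evidence=1):
    """Return a list of problems found in one fault hypothesis.

    hypothesis: dict with "path" (entity names, root cause first) and
                "evidence" (IDs of the alerts it cites).
    alert_ids:  set of alert IDs that actually exist in the input.
    kg_edges:   set of (source, target) pairs along which a fault is
                assumed to be able to propagate.
    """
    problems = []
    cited = hypothesis["evidence"]

    # Fabricated evidence: cited alerts that are not in the input.
    unknown = sorted(a for a in cited if a not in alert_ids)
    if unknown:
        problems.append(f"cites alerts that do not exist: {unknown}")

    # Evidential insufficiency: too few real alerts support the claim.
    supported = [a for a in cited if a in alert_ids]
    if len(supported) < min_evidence:
        problems.append("insufficient supporting evidence")

    # KG consistency: every hop of the path must be a known edge.
    path = hypothesis["path"]
    for src, dst in zip(path, path[1:]):
        if (src, dst) not in kg_edges:
            problems.append(f"no KG edge {src} -> {dst}")
    return problems


# Hypothetical inputs.
known_alerts = {"a1", "a2"}
edges = {("db", "payment"), ("payment", "checkout")}
grounded = {"path": ["db", "payment", "checkout"], "evidence": ["a1"]}
ungrounded = {"path": ["host-9", "checkout"], "evidence": ["a7"]}

grounded_problems = check_hypothesis(grounded, known_alerts, edges)
ungrounded_problems = check_hypothesis(ungrounded, known_alerts, edges)
```

Here the first hypothesis passes all three checks, while the second is flagged for citing a nonexistent alert, lacking real evidence, and claiming a propagation hop that the graph does not contain.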

RCA-specific failures reflect insufficient domain knowledge. They include misreading metric directionality (RF-02), confusing symptom observers with root-cause sources (RF-03), unjustified instance-level claims (RF-06), and arbitrary evidence selection inconsistent with standard triage heuristics (RF-07). These failures appear consistently across models and workflows, suggesting they stem from a lack of domain specification rather than workflow sensitivity. Explicit triage guidance and fine-tuning on diagnostic reasoning patterns are likely necessary to address them.

Procedural failures arise in the agent's decision-to-act loop. They include failure to update beliefs after contradicting evidence (RF-09), treating simulated tool outputs as factual (RF-10), excessive speculative reasoning that blocks analysis (RF-11), and repetitive stalling without substantive progress (RF-12). These failures are most pronounced in smaller models under agentic workflows, and their prevalence rises sharply with workflow complexity for models that cannot sustain multi-step planning.

Key Insights

RQ1: How effectively can LLM agents perform RCA?

The RCA task proves challenging even for competitive open-source LLMs: performance falls below the guessing baseline as workflow complexity grows, particularly for smaller models. Agentic workflows can improve propagation path accuracy, suggesting better grounding in the system knowledge graph, but whether a given LLM can adequately exploit them is highly model-specific. The utility of smaller models in more complex workflows such as Plan-and-Execute is critically constrained by frequent recursion-limit failures. This reflects a capacity mismatch: the multi-step planning these workflows demand exceeds what smaller models can sustain.

RQ2: How sensitive are final RCA outcomes to alert modalities?

Excluding metrics or logs markedly reduces accuracy, indicating that these are the modalities LLMs leverage most effectively: metrics for fault localization and logs for interpreting fault type. Conversely, excluding traces often improves accuracy, suggesting that current models struggle to integrate raw trace alerts and that their inclusion can distract reasoning. These results identify which modalities LLMs can reason with effectively and which require better integration strategies.
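A modality ablation of this kind amounts to rerunning inference on alert sets with one modality removed. The sketch below shows how the input conditions could be constructed; the alert dictionaries, the condition names, and the helper functions are hypothetical and do not reflect the study's data format.

```python
MODALITIES = ("log", "metric", "trace")


def ablate(alerts, excluded):
    """Return the alerts with one modality removed."""
    if excluded not in MODALITIES:
        raise ValueError(f"unknown modality: {excluded}")
    return [a for a in alerts if a["modality"] != excluded]


def ablation_conditions(alerts):
    """Build the full input plus one leave-one-modality-out variant each."""
    conditions = {"all": list(alerts)}
    for modality in MODALITIES:
        conditions[f"no_{modality}"] = ablate(alerts, modality)
    return conditions


# Hypothetical unified alerts for a single fault scenario.
scenario_alerts = [
    {"entity": "payment", "modality": "metric", "message": "CPU spike"},
    {"entity": "payment", "modality": "log", "message": "timeout error"},
    {"entity": "checkout", "modality": "trace", "message": "slow span"},
    {"entity": "checkout", "modality": "metric", "message": "high latency"},
]
conditions = ablation_conditions(scenario_alerts)
```

Each condition would then be passed through the same workflow and scored against the same ground truth, so that any accuracy change can be attributed to the missing modality.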

RQ3: What reasoning failures appear in agent inference traces?

Reasoning failures are pervasive across models and workflows and fall into general, RCA-specific, and procedural categories. Prevalence varies by model and workflow: procedural failures are notably elevated in Llama 3.2 and the Qwen 3 variants under agentic control, while general per-hypothesis failures have the highest average prevalence overall. RCA-specific reasoning remains insufficient, and general failures continue to dominate outputs.

RQ4: How do reasoning failures affect correctness?

The presence of reasoning failures (particularly anchoring bias, repetition and stalled progress, arbitrary evidence selection, and failure to update beliefs) is associated with substantially reduced RCA correctness. Agentic workflows are often associated with smaller negative risk differences, but do not eliminate them. Co-occurrence of reasoning failures and early failure compounding remain plausible contributors to the accuracy degradations observed in RQ1.
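As a point of reference, a risk difference can be read as the correctness rate of traces that exhibit a given failure minus the rate of traces that do not, so a negative value means the failure is associated with lower correctness. The sketch below assumes this definition; the record format and the sample data are hypothetical and are not results from the study.

```python
def risk_difference(records, failure_id):
    """P(correct | failure present) minus P(correct | failure absent).

    Returns None when either group is empty.
    """
    present = [r["correct"] for r in records if failure_id in r["failures"]]
    absent = [r["correct"] for r in records if failure_id not in r["failures"]]
    if not present or not absent:
        return None
    return sum(present) / len(present) - sum(absent) / len(absent)


# Hypothetical annotated traces: failure labels and final correctness.
traces = [
    {"failures": {"RF-13"}, "correct": True},
    {"failures": {"RF-13"}, "correct": False},
    {"failures": {"RF-13", "RF-12"}, "correct": False},
    {"failures": {"RF-13"}, "correct": False},
    {"failures": set(), "correct": True},
    {"failures": {"RF-12"}, "correct": True},
    {"failures": set(), "correct": True},
    {"failures": set(), "correct": False},
]
rd_anchoring = risk_difference(traces, "RF-13")
```

In this toy data, traces with RF-13 are correct 25% of the time versus 75% without it, giving a risk difference of -0.5. Such a difference describes an association only and does not by itself establish that the failure caused the incorrect result.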

Hierarchical taxonomy of the 16 reasoning failures identified in LLM RCA inference traces. Columns partition failure categories (general, RCA-specific, procedural); horizontal swimlanes indicate scope (per-hypothesis, full-trace, or cross-cutting).

Conclusion

Current open-source LLMs face substantial barriers to reliable cloud RCA. Accuracy remains low across models and workflows, reasoning failures are pervasive, and adding agentic complexity often compounds errors rather than resolving them. Progress will require improving specific reasoning capabilities—not simply adding more agentic structure.

The results point to concrete directions: early hypothesis diversification, self-consistency and evidence-sufficiency checks, explicit domain guidance for triage and causal reasoning, and better alert representation strategies, particularly for trace data. Understanding how reasoning failures co-occur and compound remains an important open direction, especially in multi-step and multi-agent settings where early missteps cascade.

More broadly, this work argues that transparency in reasoning quality is as important as accuracy in evaluating RCA agents. The failure taxonomy and LLM-as-a-Judge evaluator developed here are reusable components for diagnosing and improving LLM reasoning in complex system diagnosis—and, by extension, in other reasoning-intensive agentic tasks.

Resources & Artifacts

Citation

Evelien Riddell, James Riddell, Gengyi Sun, Michał Antkiewicz, and Krzysztof Czarnecki. 2026. Stalled, Biased, and Confused: Uncovering Reasoning Failures in LLMs for Cloud-Based Root Cause Analysis. In 2026 IEEE/ACM Third International Conference on AI Foundation Models and Software Engineering (FORGE '26), April 12–13, 2026, Rio de Janeiro, Brazil. ACM. https://doi.org/10.1145/3793655.3793732