We introduce the Precise Synthetic Image and LiDAR (PreSIL) dataset for autonomous vehicle perception. Grand Theft Auto V (GTA V), a commercial video game, has a large detailed world with realistic graphics, which provides a diverse data collection environment. Existing work creating synthetic data for autonomous driving with GTA V have not released their datasets and rely on an in-game raycasting function which represents people as cylinders and can fail to capture vehicles past 30 metres. Our work creates a precise LiDAR simulator within GTA V which collides with detailed models for all entities no matter the type or position. The PreSIL dataset consists of over 50,000 instances and includes high-definition images with full resolution depth information, semantic segmentation (images), point-wise segmentation (point clouds), ground point labels (point clouds), and detailed annotations for all vehicles and people. Collecting additional data with our framework is entirely automatic and requires no human annotation of any kind. We demonstrate the effectiveness of our dataset by showing an improvement of up to 5% average precision on the KITTI 3D Object Detection benchmark challenge when state-of-the-art 3D object detection networks are pre-trained with our data. Our dataset will be made publicly available upon publication.

Paper

The full paper can be accessed on arXiv.org.

Dataset Download

Please use the dataset browser to download dataset files.

Dataset Creation

All data generation code can be accessed from DeepGTAV-PreSIL GitHub repository.

Scripts for generating ground planes, splits, and visualizations can be found at PreSIL tools GitHub repository.

Data Sample

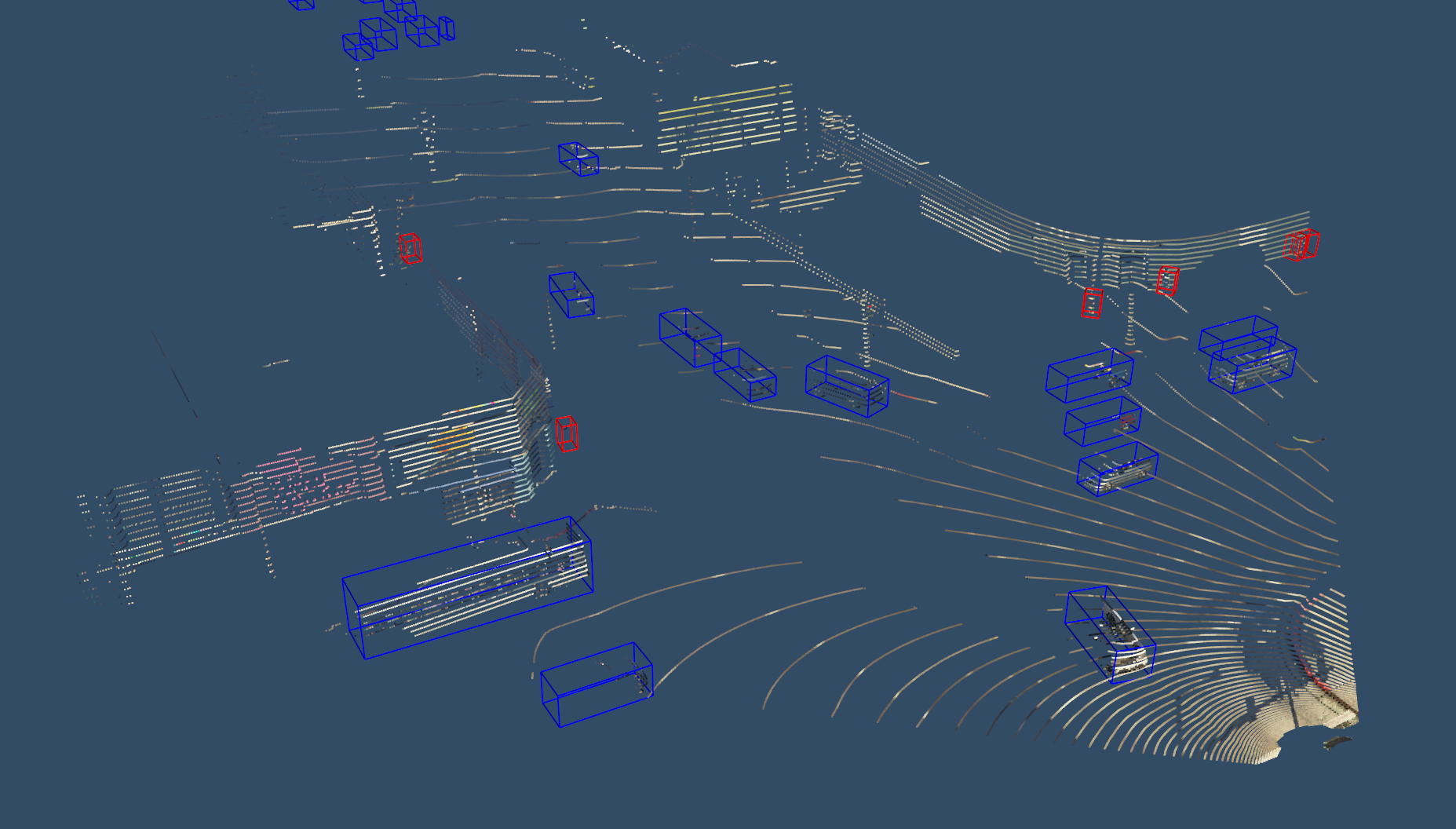

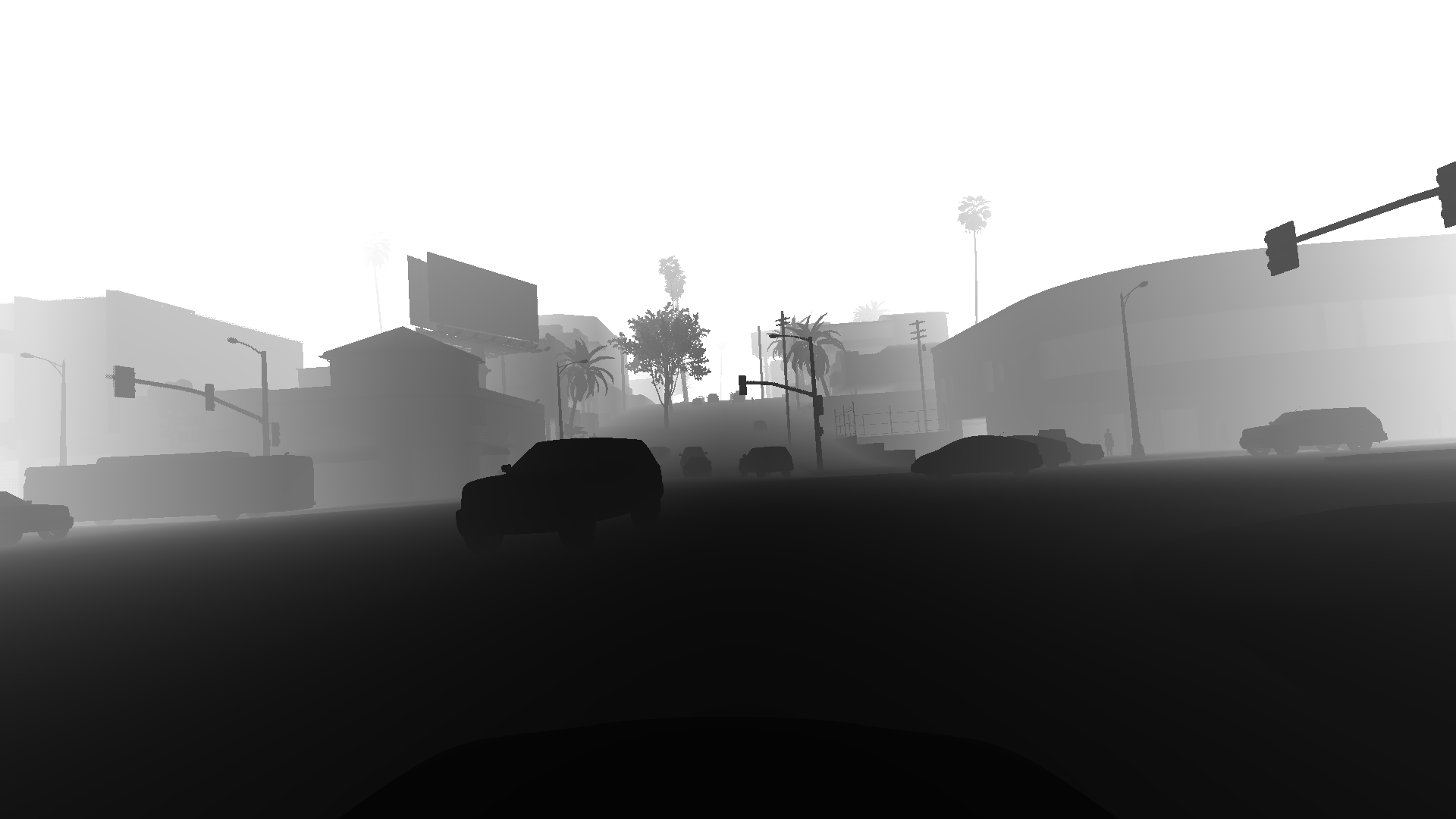

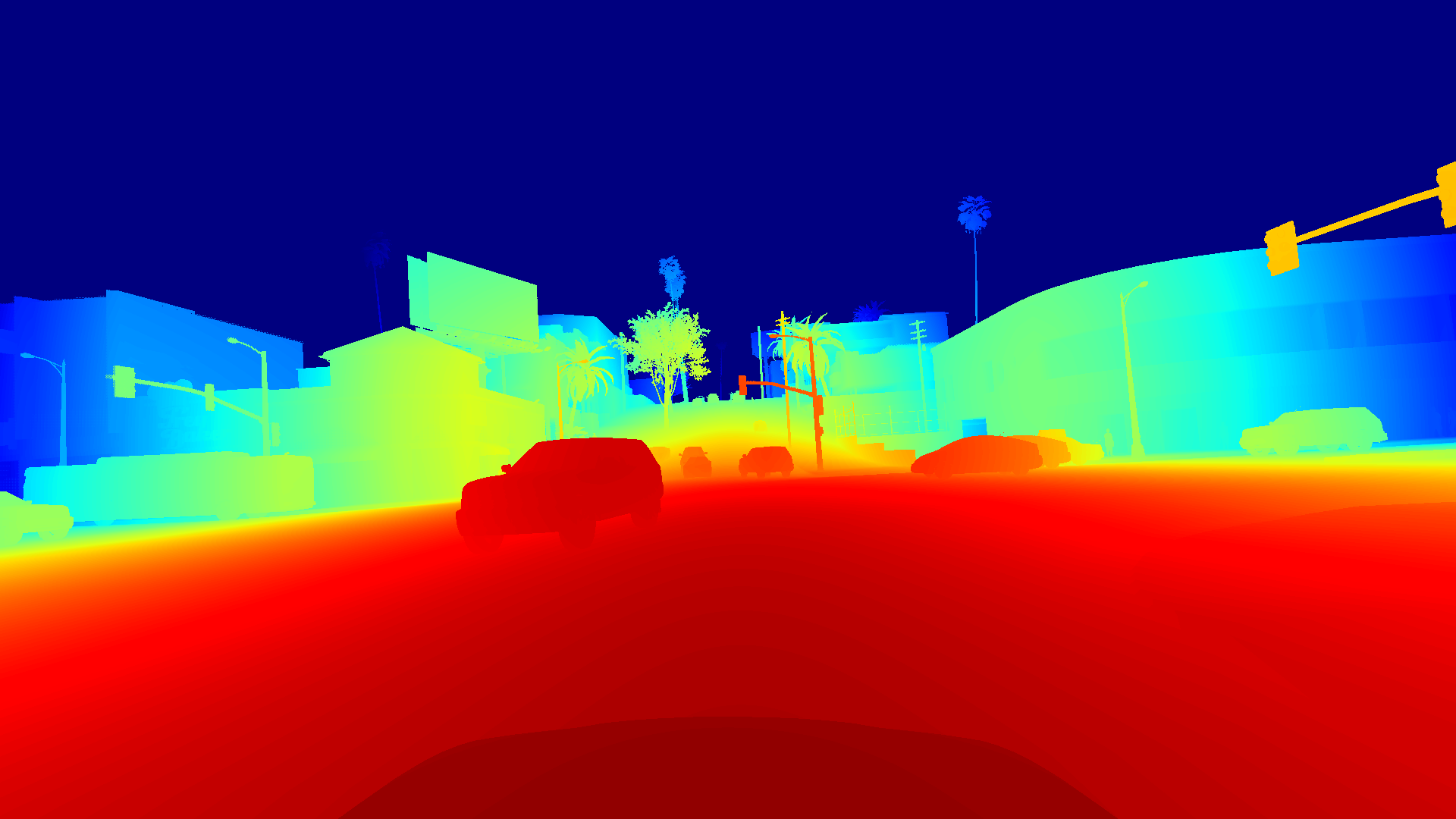

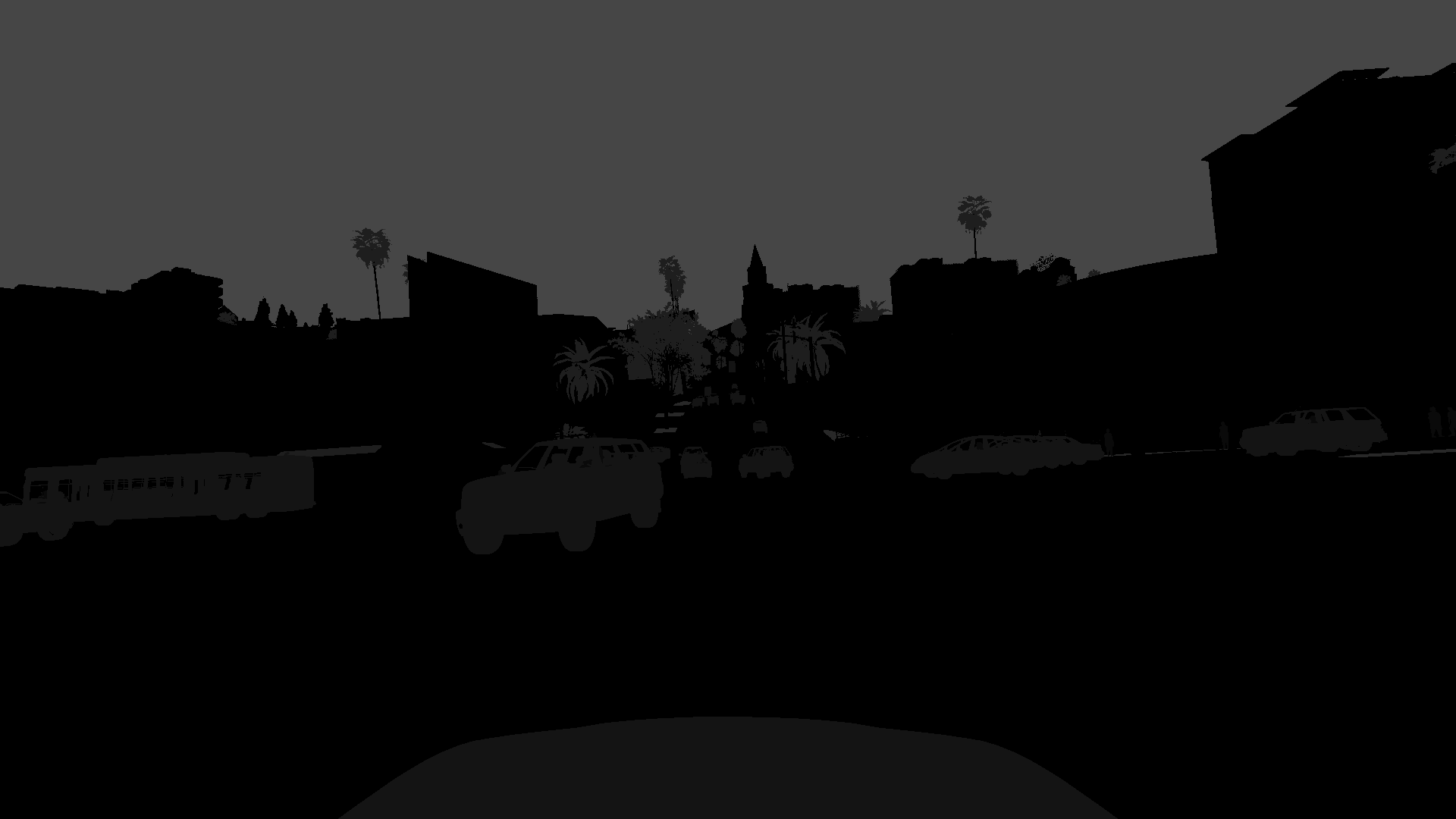

A sample image and point cloud collected using the PreSIL data collection method.

Image

Lidar point cloud

Linearized Depth Map (Grey and Colour)

Object Instance Segmentation

Stencil Buffer

This project is a collaboration with WAVE Lab.

We are looking for postdocs and graduate students interested in working on all aspects of autonomous driving.

For more information, visit Open positions.