Tackling fake news

Waterloo Engineering researchers are developing new technologies to combat disinformation — a robust solution to fake news


By John Roe, Faculty of Engineering

Cutting-edge technologies gave the world fake news, but researchers from the University of Waterloo’s Faculty of Engineering are developing even newer technology to stop it. Their innovative system, the first of its kind, relies on the technology best known for underpinning Bitcoin and other cryptocurrencies: blockchain. But in addition to sophisticated machines, these researchers are enlisting humans to establish the truth. Their goal is a world where people have greater trust in the news they see and hear.

The danger that disinformation, or fake news, poses to democracy is real. There is evidence that fake news may have influenced how people voted in two major political events of 2016: Brexit, the United Kingdom’s exit from the European Union, and the U.S. presidential election that brought Donald Trump to power. More recently, the Canadian government has warned Canadians to be aware of a Russian disinformation campaign surrounding Russia’s war against Ukraine. Although big tech companies, including Facebook and Google, have established policies to prevent the spread of fake news on their platforms, they’ve had limited success.

A team of Waterloo researchers hopes to do better. According to Chien-Chih Chen, one of the project’s lead researchers and a PhD candidate in electrical and computer engineering, the system he and his colleagues have developed over the past three years is “unique” and consists of three main components.

First, a news article is published on a decentralized platform built on blockchain technology, which keeps a transparent, immutable record of every transaction related to news articles. This makes it extremely difficult for anyone to manipulate or tamper with the information.
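The article does not describe how the platform’s blockchain is built. As a rough illustration of why an append-only chain of hashes makes tampering evident, here is a minimal Python sketch. The `Block` and `Ledger` classes and their payload fields are hypothetical, not the researchers’ code, and a real blockchain also runs a distributed consensus protocol across many nodes, which this single-process example leaves out.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Block:
    index: int
    payload: dict      # hypothetical record: an article, or a validator's vote
    prev_hash: str     # digest of the block before this one
    digest: str = field(init=False)

    def __post_init__(self) -> None:
        self.digest = self.compute_hash()

    def compute_hash(self) -> str:
        # Hash this block's contents together with the previous block's digest,
        # so altering any earlier block breaks every link after it.
        body = json.dumps(
            {"index": self.index, "payload": self.payload, "prev_hash": self.prev_hash},
            sort_keys=True,
        )
        return hashlib.sha256(body.encode("utf-8")).hexdigest()


class Ledger:
    """Hypothetical append-only log; not a full blockchain."""

    def __init__(self) -> None:
        self.chain = [Block(0, {"genesis": True}, "0" * 64)]

    def append(self, payload: dict) -> Block:
        block = Block(len(self.chain), payload, self.chain[-1].digest)
        self.chain.append(block)
        return block

    def is_valid(self) -> bool:
        for i, block in enumerate(self.chain):
            if block.digest != block.compute_hash():
                return False  # contents no longer match the stored digest
            if i > 0 and block.prev_hash != self.chain[i - 1].digest:
                return False  # link to the previous block is broken
        return True


ledger = Ledger()
ledger.append({"article_id": "a1", "title": "Example headline"})
ledger.append({"article_id": "a1", "validator": "v7", "vote": True})
print(ledger.is_valid())  # True
ledger.chain[1].payload["title"] = "Edited headline"
print(ledger.is_valid())  # False: the edit is detected
```

Even if a tamperer recomputes the edited block’s digest, the next block’s `prev_hash` no longer matches, so the check still fails.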

Blockchains are best known for securing cryptocurrency transactions. Chen said they can secure his news-verification system in the same way.

Second comes human intelligence: a quorum of validators, incentivized with rewards or penalties, assesses whether the news story under review is true or false.

The quorum would be a subset of the larger community of users on the platform. A quorum’s members could be chosen at random from people interested in validating news stories or from those with a proven reputation for authenticating news — or a combination of both groups. They’d verify news stories by reading an article and judging its veracity based on their own knowledge and sources. They would then state their opinion on whether or not the article is accurate.
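The article leaves open exactly how a quorum would be drawn, saying only that members could be chosen at random, by reputation, or by a mix of both. The Python sketch below shows one hypothetical way to implement that mix. The function name `select_quorum`, the reputation scores, and the `reputation_share` parameter are all illustrative assumptions, not details of the Waterloo system.

```python
import random
from typing import Optional


def select_quorum(reputation: dict[str, float], size: int,
                  reputation_share: float = 0.5,
                  seed: Optional[int] = None) -> list[str]:
    """Hypothetical quorum picker: some seats by reputation, the rest at random.

    `reputation` maps each volunteer validator to an assumed track-record score.
    """
    if not 0 <= size <= len(reputation):
        raise ValueError("quorum size must be between 0 and the pool size")
    if not 0.0 <= reputation_share <= 1.0:
        raise ValueError("reputation_share must be between 0 and 1")
    rng = random.Random(seed)
    n_top = round(size * reputation_share)
    # Seats reserved for validators with the strongest record of authenticating news.
    ranked = sorted(reputation, key=reputation.get, reverse=True)
    top = ranked[:n_top]
    # Remaining seats are drawn uniformly at random from everyone else in the pool.
    rest = rng.sample(ranked[n_top:], size - n_top)
    return top + rest


pool = {"alice": 0.9, "bob": 0.8, "carol": 0.2, "dave": 0.5, "erin": 0.1}
print(select_quorum(pool, size=3, reputation_share=2 / 3, seed=1))
```

Setting `reputation_share` to 0 gives a purely random quorum and setting it to 1 gives a purely reputation-based one, matching the two extremes the article mentions.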

Chien-Chih (Joseph) Chen, a Waterloo Engineering PhD candidate in electrical and computer engineering, is part of a research project developing new technologies to combat disinformation.

Of course, the big question for anyone concerned about the accuracy of a particular news story is how far they can trust the quorum of human validators. The system designed by Chen and his fellow researchers answers this with its third component: an entropy-based incentive mechanism.

The quorum’s collective opinion would be used to establish a consensus on the accuracy of the article. The article would then be validated — or flagged as fake news — based on the outcome of the consensus mechanism. “Validators who provide accurate information that aligns with the consensus of the majority would be rewarded while those who provide fake news or inaccurate information would be penalized,” Chen explained. Those rewards or penalties could be in the form of various cryptocurrencies, such as Bitcoin, Ether or XRP.
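The consensus-and-payout step described above can be sketched as a simple majority vote. This is a minimal Python illustration under stated assumptions: the function name `settle`, the flat `reward` and `penalty` amounts in abstract token units, and the decision to pay nobody on a tie are all hypothetical choices, not the researchers’ published rules, and no real cryptocurrency is involved.

```python
from collections import Counter
from typing import Optional


def settle(votes: dict[str, bool], reward: float = 1.0,
           penalty: float = 1.0) -> tuple[Optional[bool], dict[str, float]]:
    """Hypothetical majority consensus.

    `votes` maps validator -> True (article accurate) or False (fake).
    Returns the verdict (None on a tie) and each validator's token change.
    """
    tally = Counter(votes.values())
    if tally[True] == tally[False]:
        # Assumed tie rule: no consensus, so nobody is rewarded or penalized.
        return None, {v: 0.0 for v in votes}
    verdict = tally[True] > tally[False]
    # Validators who sided with the majority gain; the rest lose.
    payouts = {v: (reward if vote == verdict else -penalty)
               for v, vote in votes.items()}
    return verdict, payouts


verdict, payouts = settle({"v1": True, "v2": True, "v3": False})
print(verdict, payouts)  # True {'v1': 1.0, 'v2': 1.0, 'v3': -1.0}
```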

Ultimately, the article would be validated as trustworthy on the platform only if most of the validators’ opinions align with the truth. The user who published the article might then receive a reward through the entropy-based incentive mechanism; if the article is exposed as fake news, that user could be penalized instead. The entropy measure would also tell readers how much uncertainty there is in the verdict.
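The article does not give the researchers’ entropy formula. One standard way to measure how divided a quorum is uses the Shannon entropy of its true/false votes, which for two outcomes is 0 bits when the vote is unanimous and 1 bit when it splits evenly:

$$H = -\sum_{x \in \{\text{true},\,\text{false}\}} p_x \log_2 p_x$$

The Python sketch below computes that value and then applies a purely hypothetical publisher rule that scales a fixed `stake` by the quorum’s decisiveness, 1 − H. Both `publisher_payout` and the scaling rule are illustrative assumptions, not the Waterloo mechanism.

```python
import math
from collections import Counter


def vote_entropy(votes: list[bool]) -> float:
    """Shannon entropy in bits of the quorum's votes: 0 = unanimous, 1 = even split."""
    n = len(votes)
    counts = Counter(votes)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def publisher_payout(votes: list[bool], stake: float = 10.0) -> float:
    """Hypothetical rule: reward or penalize the publisher, scaled by 1 - entropy."""
    confidence = 1.0 - vote_entropy(votes)  # 1.0 when unanimous, 0.0 when evenly split
    judged_true = sum(votes) > len(votes) / 2
    return stake * confidence if judged_true else -stake * confidence


print(vote_entropy([True, True, True, True]))    # 0 bits: no disagreement
print(vote_entropy([True, False]))               # 1.0 bit: maximum uncertainty
print(round(vote_entropy([True, True, True, False]), 4))  # 0.8113
```

Under this rule, a close vote carries little reward or penalty for the publisher, and the same entropy value could be shown to readers alongside the verdict.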

At this point the researchers have built, and are working with, an early prototype. While initial test results are promising, the system is still in development and needs significant further work before it can be used in the real world. Even so, an industrial partner, Ripple Labs Inc., a leading provider of crypto solutions for businesses, is sponsoring the Waterloo research.

“We are confident our system has the potential to be applied in practical situations within the next few years,” Chen said. “We believe it can provide a robust solution to fake news. I hope my research can impact the world to make a positive difference.”

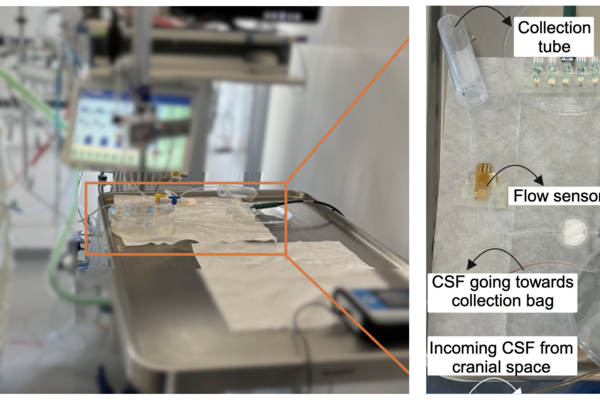

NeuroSense, a new bedside monitoring system designed to detect infections in brain-injured patients, being tested in an ICU setting (University Medicine Rostock).

Read more

New monitoring system tracks cerebrospinal fluid at the bedside for faster intervention

Read more

University of Waterloo researchers find arm slot and torso tilt play key roles in UCL strain

Read more

This year’s list spotlights nearly 100 innovators helping to strengthen Canadian sovereignty
