New software developed at the University of Waterloo could make it easier to adopt and trust powerful artificial intelligence (AI) systems that generate stock market predictions, assess who qualifies for mortgages and set insurance premiums.

The software is designed to analyze and explain decisions made by deep-learning AI algorithms, providing key insights needed to satisfy regulatory authorities and give analysts confidence in their recommendations.

“The potential impact, especially in regulatory settings, is massive,” said Devinder Kumar, lead researcher and a PhD candidate in systems design engineering at Waterloo. “If you can’t provide reasons for their decisions, you can’t use those state-of-the-art systems right now.”

Deep-learning AI algorithms essentially teach themselves by processing and detecting patterns in vast quantities of data. As a result, even their creators don’t know why they come to their conclusions.

To develop a program capable of explaining deep-learning AI decisions, the researchers first created an algorithm to predict next-day movements on the S&P 500 stock index.

That system was trained with three years of historical data and programmed to make predictions based on market information from the previous 30 days.
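As a rough illustration of what "predictions based on market information from the previous 30 days" can look like in practice, the sketch below slices a table of daily values into overlapping 30-day windows, each paired with a next-day up/down label. The function name `make_windows`, the column order and the up/down labelling rule are assumptions made for this example; the article does not describe how the researchers actually prepared their data.

```python
import numpy as np

def make_windows(daily, lookback=30):
    """Build (window, next-day label) pairs from daily market data.

    daily: array of shape (n_days, 5) with columns assumed to be
           open, high, low, close, volume (hypothetical ordering).
    Returns X with shape (n_samples, lookback, 5) and y with shape
    (n_samples,), where y is 1 if the close on the day after the
    window is higher than the window's last close, else 0.
    """
    daily = np.asarray(daily, dtype=float)
    close = daily[:, 3]
    X, y = [], []
    for end in range(lookback, daily.shape[0]):
        X.append(daily[end - lookback:end])           # the previous `lookback` days
        y.append(1 if close[end] > close[end - 1] else 0)  # next-day direction
    return np.array(X), np.array(y)
```

With about three years of trading days as input, this would yield several hundred such windows, each of which a predictive model could be trained on.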

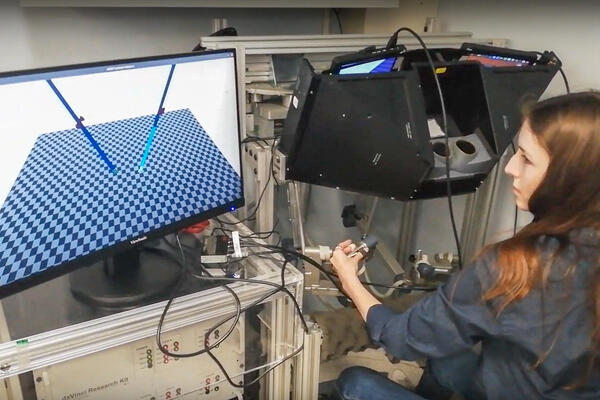

Explanatory software called CLEAR-Trade was then developed to examine those predictions and produce colour-coded graphs and charts highlighting the days and daily factors – index high, low, open and close levels, plus trading volume – most relied on by the AI system.
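The article does not say how CLEAR-Trade computes which days and factors matter, so the sketch below should not be read as its method. It shows one generic, well-known way to get a similar day-by-factor picture, occlusion-style attribution: replace one value at a time with that factor's average and measure how much the prediction moves. The names `occlusion_attribution` and `toy_predict`, and the toy model itself, are hypothetical stand-ins.

```python
import numpy as np

FACTORS = ["open", "high", "low", "close", "volume"]  # assumed column order

def occlusion_attribution(predict, window):
    """Score each (day, factor) cell of a window by prediction sensitivity.

    predict: any function mapping a (days, factors) array to a number.
    Each cell is replaced in turn by its factor's mean over the window,
    and the absolute change in the prediction is recorded.
    """
    window = np.asarray(window, dtype=float)
    baseline = predict(window)
    means = window.mean(axis=0)
    scores = np.zeros_like(window)
    for d in range(window.shape[0]):
        for f in range(window.shape[1]):
            perturbed = window.copy()
            perturbed[d, f] = means[f]
            scores[d, f] = abs(predict(perturbed) - baseline)
    return scores

def toy_predict(window):
    # Hypothetical stand-in for a trained model: it looks only at the
    # change in close between the last two days of the window.
    return float(window[-1, 3] - window[-2, 3])
```

Summing the resulting scores across factors gives a per-day importance, and summing across days gives a per-factor importance; either could be drawn as a colour-coded chart of the kind described above.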

Those insights would enable analysts to use their experience and knowledge of recent world events to determine whether deep-learning AI decisions actually make sense.

“If you’re investing millions of dollars, you can’t just blindly trust a machine when it says a stock will go up or down,” said Kumar, who expects to start field trials of the software within a year. “This will allow financial institutions to use the most powerful, state-of-the-art methods to make decisions.”

The ability to explain deep-learning AI decisions is expected to become increasingly important as the technology advances and regulators require financial institutions to explain those decisions to the people affected by them.

While the stock market was used for development purposes, Kumar said the explanatory software is applicable to predictive deep-learning AI systems in all areas of finance.

Kumar, who collaborated with professors Alexander Wong of Waterloo and Graham Taylor of the University of Guelph, will present the research at the two-day Conference on Vision and Imaging Systems at the University of Waterloo at the end of October.