Amazon partners with Waterloo to support AI research

Students and researchers will explore new ways to apply artificial intelligence to the Internet of Things through Alexa Fund Fellowship

By Brian Caldwell, Faculty of Engineering

Photo: cofoistome/iStock/Thinkstock

Recent advances in the fields of human-machine interaction and artificial intelligence (AI) have been so swift that even experts like Fakhri Karray shake their heads in amazement.

Just five or six years ago, the University of Waterloo engineering professor had trouble imagining computer software systems capable of both recognizing and “understanding” everyday speech with an extremely high degree of accuracy.

Now, not only is that technology available to consumers, but Karray is also part of a program funded by Amazon to educate, encourage and support students as they explore new ways to apply it in the burgeoning Internet of Things (IoT).

“It’s evolving at incredible speed,” says Karray, an electrical and computer engineering professor, University Research Chair and director of the Centre for Pattern Analysis and Machine Intelligence.

At the core of the new program is Alexa, a cloud-based service that powers devices such as Amazon Echo, Echo Dot and Amazon Tap.

Using natural speech recognition technology, Alexa processes queries and commands from users, then performs requested tasks such as playing music, providing weather reports, and activating devices and appliances.

As part of the Alexa Fund Fellowship, which was formally announced today, Amazon is supplying Alexa-enabled devices and sending an Alexa speech scientist to mentor Waterloo researchers and students.

The program will include three undergraduate courses for biomedical and mechatronics engineering students, hosted by the Department of Systems Design Engineering and currently run by Professor Alexander Wong and Professor Igor Ivkovic, as well as a graduate-level course hosted by the Department of Electrical and Computer Engineering and currently run by Karray.

Engineering students will learn how Alexa can make the human-computer interface experience more natural.

Another key component of the program will be student projects that integrate Alexa with AI tools to enable users to operate machines and devices in an even more natural, human-like way.

“When you speak to a robot, you don’t want it to be robotic,” says Karray.

For example, instead of instructing Alexa to heat a room to a particular temperature, the goal in the future would be to simply ask if it is “warm enough” and have a smart home both understand and respond appropriately.

At least 10 class projects by fourth-year students and another half-dozen Capstone Design projects will be supervised by Chahid Ouali, a post-doctoral fellow hired to work under Karray. Ouali will also assist classroom instructors and liaise with Amazon.

The year-long program will culminate in a demonstration day for students to showcase their projects for peers, faculty and Alexa team members.

An early student research project uses Alexa and voice commands to control the lights and temperature in a three-room model house fitted with sensors. Karray hopes it will yield new technology to add capabilities to Alexa.

Such progress has been made possible, he says, by a convergence of factors including the development of powerful speech training and machine learning algorithms, the growth of cloud computing and vastly increased Internet connectivity.

“We are in the midst of a revolution in the field of operational artificial intelligence and this is one part of it,” Karray says.

He hopes to widen use of the technology beyond teaching and explore research ideas by employing Alexa as an accelerator.

Also participating in the program are Carnegie Mellon University, Johns Hopkins University and the University of Southern California.

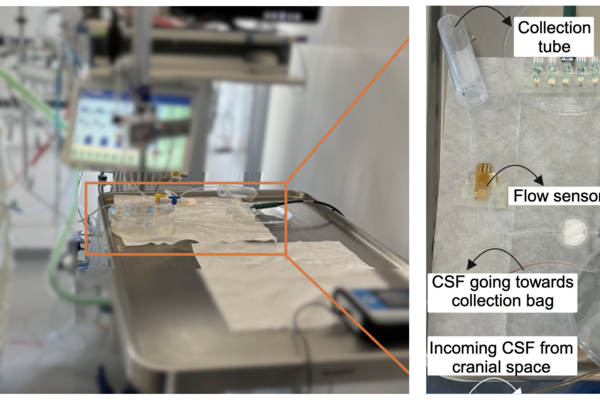

NeuroSense, a new bedside monitoring system designed to detect infections in brain-injured patients, being tested in an ICU setting (University Medicine Rostock).

Read more

New monitoring system tracks cerebrospinal fluid at the bedside for faster intervention

Read more

University of Waterloo researchers find arm slot and torso tilt play key roles in UCL strain

Read more

This year’s list spotlights nearly 100 innovators helping to strengthen Canadian sovereignty

Read

Engineering stories

Visit

Waterloo Engineering home

Contact

Waterloo Engineering
