Deep Neural Networks

2020. A review of machine learning applications in wildfire science and management. Environmental Reviews, 28(3), p.73. Available at: https://www.nrcresearchpress.com/doi/10.1139/er-2020-0019#.X1jbKtNKhTY.

2020. Supervision and Source Domain Impact on Representation Learning: A Histopathology Case Study. In 42nd Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC'20). IEEE Engineering in Medicine and Biology Society. Available at: https://embs.papercept.net/conferences/scripts/rtf/EMBC20_ContentListWeb_1.html#moat2-15_02.

Course News (ECE 657A W20)

Compact Representation of a Multi-dimensional Combustion Manifold Using Deep Neural Networks, at European Conference on Machine Learning (ECML 2019), Wurzburg, Germany, Thursday, September 19, 2019

2019. Learning Multi-Agent Communication with Reinforcement Learning. In Conference on Reinforcement Learning and Decision Making (RLDM-19). Montreal, Canada, p. 4.

2019. Comparison of Deep Learning Models for Determining Road Surface Condition from Roadside Camera Images and Weather Data. In The Transportation Association of Canada and Intelligent Transportation Systems Canada Joint Conference (TAC-ITS). Halifax, Canada, p. 16.

2019. Compact Representation of a Multi-dimensional Combustion Manifold Using Deep Neural Networks. In European Conference on Machine Learning. Wurzburg, Germany, p. 8.

Paper accepted to ECML 2019

2019. Training Cooperative Agents for Multi-Agent Reinforcement Learning. In Proc. of the 18th International Conference on Autonomous Agents and Multiagent Systems (AAMAS 2019). Montreal, Canada.

2019. Integration of Roadside Camera Images and Weather Data for Monitoring Winter Road Surface Conditions. In Canadian Association of Road Safety Professionals (CARSP) Conference. Calgary, Alberta, p. 4 (won best paper award). Available at: http://www.carsp.ca/research/research-papers/research-papers-search/download-info/integration-of-roadside-camera-images-and-weather-data-for-monitoring-winter-road-surface-conditions/.

2017. Application of Probabilistically-Weighted Graphs to Image-Based Diagnosis of Alzheimer's Disease using Diffusion MRI. In SPIE Medical Imaging Conference on Computer-Aided Diagnosis. March 3. Orlando, FL, United States: International Society for Optics and Photonics. Available at: http://dx.doi.org/10.1117/12.2254164.

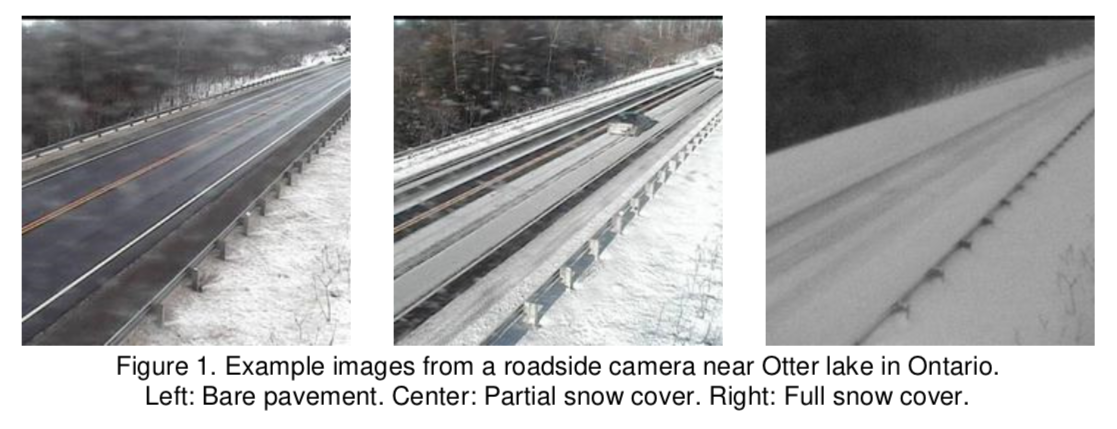

Road maintenance during the winter season is a safety-critical and resource-demanding operation. One of its key activities is determining road surface condition (RSC) in order to prioritize roads and allocate cleaning efforts such as plowing or salting. The two conventional approaches for determining RSC are visual examination of roadside camera images by trained personnel and patrolling the roads to perform on-site inspections. However, with more than 500 cameras collecting images across Ontario, visual examination is resource-intensive and difficult to scale, especially during snowstorms. This paper presents the results of a study focused on improving the efficiency of road maintenance operations. We use multiple deep learning models to automatically determine RSC from roadside camera images and weather variables, extending previous research in which similar methods were applied to the problem. The dataset was collected during the 2017-2018 winter season from 40 stations connected to the Ontario Road Weather Information System (RWIS); it includes 14,000 labeled images and 70,000 weather measurements. We train and evaluate seven state-of-the-art models from the computer vision literature, including the recent DenseNet, NASNet, and MobileNet. In addition, by integrating observations from weather variables, the models are better able to ascertain RSC under poor visibility conditions.
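The abstract does not say how the weather variables are combined with the camera images. One common option is late fusion: a vision backbone turns each image into a feature vector, the weather measurements are appended to it, and a classifier is applied to the joined vector. The sketch below illustrates only that idea with NumPy. The function name `fuse_and_classify`, the feature sizes, the number of RSC classes, and the random stand-in features and weights are all assumptions made for illustration, not details from the paper.

```python
import numpy as np

def fuse_and_classify(image_features, weather, weights, bias):
    """Late fusion sketch: join image features and weather variables, then softmax.

    All shapes and names here are hypothetical; the paper's architecture may differ.
    """
    fused = np.concatenate([image_features, weather], axis=1)  # (n, d_img + d_wx)
    logits = fused @ weights + bias                            # (n, n_classes)
    logits = logits - logits.max(axis=1, keepdims=True)        # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum(axis=1, keepdims=True)                # rows sum to 1

# Random stand-ins for backbone outputs and weather measurements (assumed sizes).
rng = np.random.default_rng(0)
n, d_img, d_wx, n_classes = 4, 8, 3, 3
image_features = rng.normal(size=(n, d_img))
weather = rng.normal(size=(n, d_wx))
weights = rng.normal(size=(d_img + d_wx, n_classes))
bias = np.zeros(n_classes)

probs = fuse_and_classify(image_features, weather, weights, bias)
print(probs.shape)  # one probability row per image
```

In a trained system the weights would be learned jointly with (or on top of) the image model; the point of the sketch is only that weather inputs give the classifier information that does not depend on visibility in the image.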


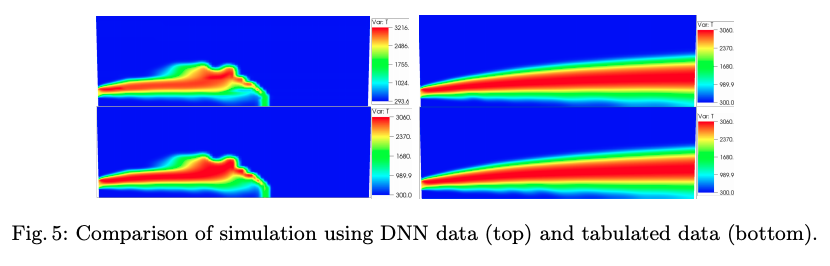

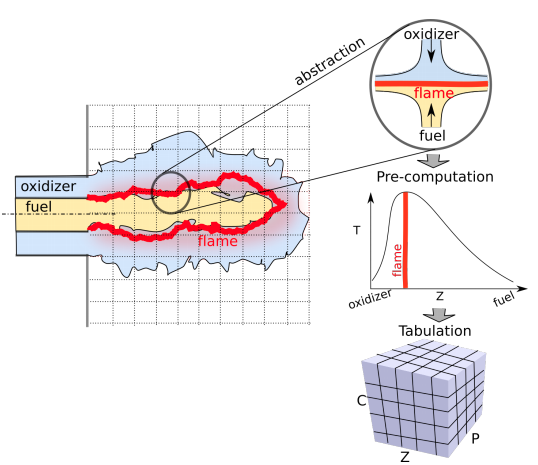

The computational challenges in turbulent combustion simulations stem from the physical complexities and multi-scale nature of the problem, which make scale-resolving simulations intractable. For most engineering applications, the large scale separation between the flame (typically sub-millimeter scale) and the characteristic turbulent flow (typically centimeter or meter scale) allows us to invoke simplifying assumptions, as is done in the flamelet model, to pre-compute all the chemical reactions and map them to a low-order manifold. The resulting manifold is then tabulated and looked up at run-time. As the physical complexity of combustion simulations increases (including radiation, soot formation, pressure variations, etc.), the dimensionality of the resulting manifold grows, which impedes efficient tabulation and look-up. In this paper we present a novel approach to modeling the multi-dimensional combustion manifold. We approximate the combustion manifold using a neural network function approximator and use it to predict the temperature and composition of the reaction. We present a novel training procedure developed to generate a smooth output curve for temperature over the course of a reaction. We then evaluate our work against the current approach of tabulation with linear interpolation in combustion simulations. We also provide an ablation study of our training procedure in the context of over-fitting in our model. The combustion dataset used for the modeling of combustion of H2 and O2 in this work is
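To make the tabulation baseline mentioned in the abstract concrete, the following is a minimal sketch of tabulation with linear interpolation in one dimension, plus a count of how many entries a regular grid needs as dimensions are added. The temperature curve `toy_temperature`, the progress variable `c`, and the grid sizes are made up for illustration; they are not the paper's data or model.

```python
import numpy as np

def toy_temperature(c):
    """Hypothetical smooth temperature profile over a progress variable c in [0, 1]."""
    return 300.0 + 1700.0 / (1.0 + np.exp(-12.0 * (c - 0.5)))

def build_table(n_points):
    """Pre-compute the function on a regular grid (the 'tabulation' step)."""
    grid = np.linspace(0.0, 1.0, n_points)
    return grid, toy_temperature(grid)

def lookup(c, grid, values):
    """Run-time look-up with linear interpolation between grid points."""
    return np.interp(c, grid, values)

def table_entries(n_points, n_dims):
    """Entries in a regular grid with n_points per dimension."""
    return n_points ** n_dims

queries = np.linspace(0.0, 1.0, 1001)
exact = toy_temperature(queries)
coarse_err = np.max(np.abs(lookup(queries, *build_table(11)) - exact))
fine_err = np.max(np.abs(lookup(queries, *build_table(101)) - exact))

print(f"max error, 11-point table:  {coarse_err:.3f}")
print(f"max error, 101-point table: {fine_err:.3f}")
print("entries for 100 points in 4 dimensions:", table_entries(100, 4))
```

A finer grid lowers the interpolation error, but the number of stored entries grows exponentially with the number of manifold dimensions. That growth is the cost a function approximator such as a neural network aims to avoid, since its parameter count does not have to scale with the grid.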
