A new study by researchers from the University of Waterloo and McGill University was featured in TechTalks, a leading online publication covering artificial intelligence.

The paper focuses on an understudied aspect of reinforcement learning (RL), a type of machine learning inspired by the intelligent behaviour of animals and humans. Early applications of RL have shown improved efficiency over other types of machine learning; however, RL's vulnerability to data leaks has yet to be thoroughly explored.

The research was conducted by Systems Design Engineering (SYDE) Professor Alexander Wong and his PhD student Hossein Aboutalebi in partnership with researchers at McGill University and Mila, a collaborative artificial intelligence research institute based in Quebec.

The results of the study show that RL models can be effectively attacked, and that this risk is significant enough to warrant further research before RL is more widely adopted in real-world applications.

“Our efforts in developing the first generation of privacy-preserving deep reinforcement learning algorithms made us realize the fundamental structural differences between classic machine learning (ML) algorithms and reinforcement learning algorithms from the privacy point of view,” the authors said.

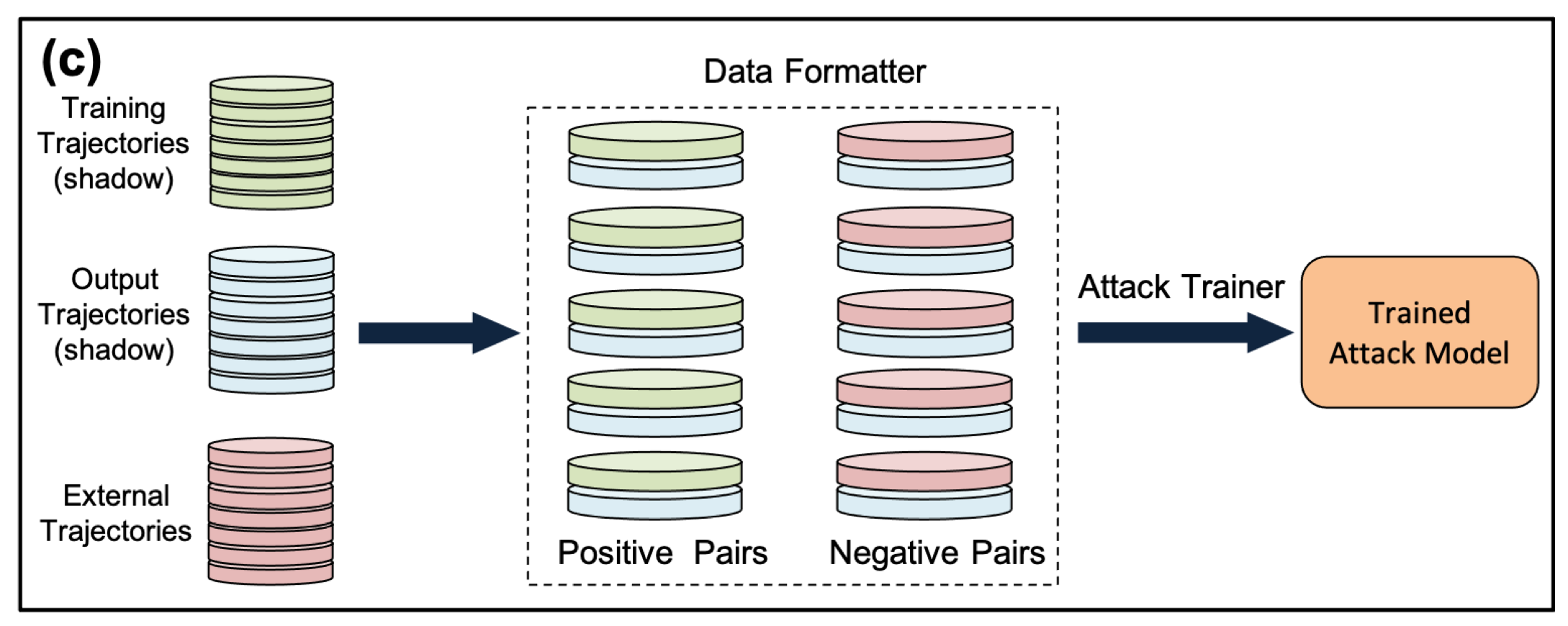

Training the attack classifier: the input and output trajectories are paired together in a data formatter to provide positive training pairs for the attack model. Another set of trajectories, which was not used to train the shadow model, is combined with the output trajectories to create negative training pairs. The attack model is then trained on these paired trajectories.
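For readers who want a concrete picture of the pairing step described in the caption, here is a minimal Python sketch. It is not the authors' code. It assumes that each trajectory is a list of state vectors and that input and output trajectories line up one-to-one. It also replaces the paper's trained attack model with a much simpler stand-in: a distance threshold that labels a pair as "member" when the two trajectories are close. The names `format_pairs`, `pair_distance` and `train_threshold_attack` are hypothetical and used only for illustration.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical representation: a trajectory is a list of state vectors.
Trajectory = List[List[float]]
# (candidate trajectory, shadow-model output trajectory, label)
Pair = Tuple[Trajectory, Trajectory, int]


def format_pairs(
    train_trajs: Sequence[Trajectory],
    unseen_trajs: Sequence[Trajectory],
    output_trajs: Sequence[Trajectory],
) -> List[Pair]:
    """Data formatter: build labeled pairs for the attack model."""
    pairs: List[Pair] = []
    # Positive pairs: trajectories the shadow model was trained on,
    # paired with the trajectories the shadow model produced.
    for inp, out in zip(train_trajs, output_trajs):
        pairs.append((inp, out, 1))
    # Negative pairs: trajectories the shadow model never saw,
    # paired with the same output trajectories.
    for other, out in zip(unseen_trajs, output_trajs):
        pairs.append((other, out, 0))
    return pairs


def pair_distance(a: Trajectory, b: Trajectory) -> float:
    """Mean Euclidean distance between time-aligned states (hypothetical feature)."""
    n = min(len(a), len(b))
    return sum(math.dist(a[t], b[t]) for t in range(n)) / n


def train_threshold_attack(pairs: Sequence[Pair]) -> float:
    """Stand-in attack model: choose the distance threshold with the best accuracy.

    A pair is predicted to be a "member" pair when its distance is strictly
    below the returned threshold.
    """
    dists = sorted({pair_distance(a, b) for a, b, _ in pairs})
    candidates = dists + [dists[-1] + 1.0]

    def accuracy(threshold: float) -> float:
        correct = sum(
            (pair_distance(a, b) < threshold) == bool(label) for a, b, label in pairs
        )
        return correct / len(pairs)

    return max(candidates, key=accuracy)
```

As a toy usage example, `format_pairs(train, unseen, outputs)` with two training trajectories, two unseen trajectories and two shadow-model outputs yields four labeled pairs, and `train_threshold_attack` returns a threshold that separates them. The real attack model in the study is trained on the paired trajectories themselves rather than on a single hand-picked distance feature.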

Read the full paper: "Where Did You Learn That From? Surprising Effectiveness of Membership Inference Attacks Against Temporally Correlated Data in Deep Reinforcement Learning"