“In the future, I don’t think this is going to be replaced with a quantum computer,” said Rajibul Islam, a faculty member at the Institute for Quantum Computing (IQC) and the Department of Physics and Astronomy, as he pointed towards his desktop computer.

Instead, Islam sees classical computers like the one on his desk working together with quantum computers to solve problems that are currently intractable for classical computers alone. Thanks to new research, Islam thinks trained neural networks (classical machine learning systems that have ‘learned’ to solve a problem by analysing examples) could play an important role in this hybrid future.

Islam, in collaboration with Roger Melko, an IQC affiliate and faculty member in the Department of Physics and Astronomy, has demonstrated that a trained neural network can quickly determine the experimental settings a trapped-ion quantum simulator needs in order to run a given quantum simulation. The result is a step toward hybrid classical-quantum computers that could tackle the hardest problems in physics and materials science.

Easy in, not so easy out

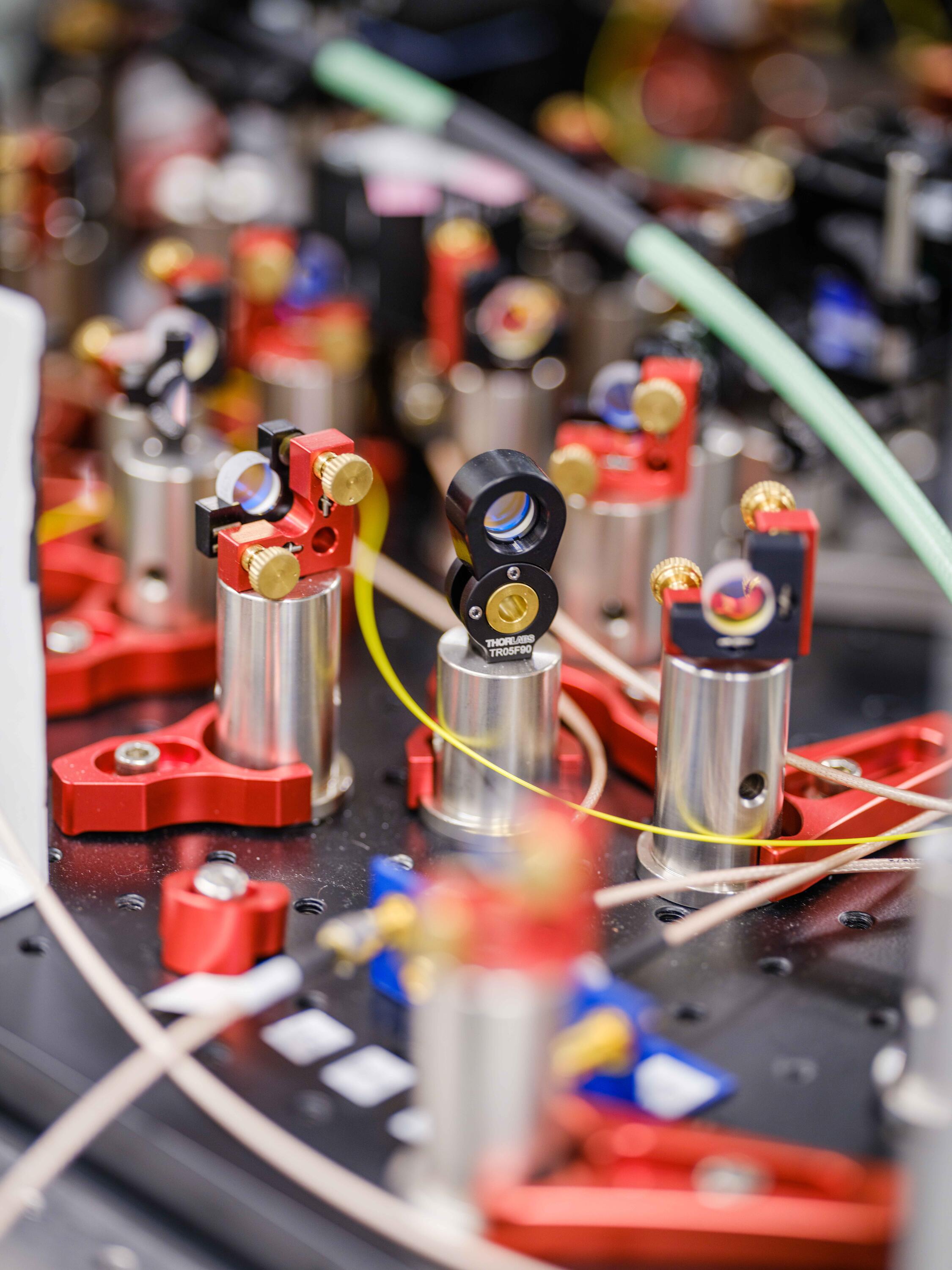

Once the parameters of the simulator, such as laser frequency and intensity, are set, it is simple to solve an equation and determine which interaction the simulator reproduces. The reverse is much harder. If researchers want to know how to set their lasers in order to simulate a specific quantum interaction, such as the interaction of electrons in a quantum material, a classical computer must crunch numbers for each desired interaction, sometimes for hours in a large system.
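To make this forward/inverse asymmetry concrete, here is a minimal Python sketch under stated assumptions. It uses a simplified form of the effective spin-spin coupling commonly quoted for trapped-ion simulators, J_ij = Ω_i Ω_j R Σ_m b_im b_jm / (μ² − ω_m²), where μ is the laser beatnote detuning, Ω_i are Rabi frequencies, and b_im and ω_m are the normal-mode vectors and frequencies of the ion chain. The three-ion mode frequencies below are hypothetical values in arbitrary units, and the function names `couplings` and `invert_by_search` are illustrative, not the researchers' code.

```python
import math

# Hypothetical 3-ion chain: orthonormal normal-mode vectors B[m][i] and
# mode frequencies OMEGA[m] in arbitrary units (illustrative, not a real trap).
S3, S2, S6 = math.sqrt(3), math.sqrt(2), math.sqrt(6)
B = [
    [1 / S3, 1 / S3, 1 / S3],
    [-1 / S2, 0.0, 1 / S2],
    [1 / S6, -2 / S6, 1 / S6],
]
OMEGA = [5.0, 4.8, 4.5]


def couplings(mu, rabi=(1.0, 1.0, 1.0), recoil=1.0):
    """Easy direction: laser parameters -> couplings (J12, J13, J23).

    Simplified model (an assumption for this sketch):
    J_ij = rabi_i * rabi_j * recoil * sum_m B[m][i] * B[m][j] / (mu^2 - omega_m^2)
    """
    out = []
    for i, j in [(0, 1), (0, 2), (1, 2)]:
        s = sum(B[m][i] * B[m][j] / (mu ** 2 - w ** 2) for m, w in enumerate(OMEGA))
        out.append(rabi[i] * rabi[j] * recoil * s)
    return tuple(out)


def mismatch(a, b):
    """Sum of squared differences between two coupling tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def invert_by_search(target, lo=5.01, hi=6.0, steps=1000):
    """Hard direction: scan detunings, running the forward model at every step."""
    best_mu, best_err = lo, float("inf")
    for k in range(steps + 1):
        mu = lo + (hi - lo) * k / steps
        err = mismatch(couplings(mu), target)
        if err < best_err:
            best_mu, best_err = mu, err
    return best_mu


target = couplings(5.3)            # forward: one direct evaluation
print(invert_by_search(target))    # inverse: about a thousand forward evaluations
```

Even this one-parameter toy needs roughly a thousand forward evaluations per target; with per-ion laser settings and many ions the search space grows quickly, which is the cost described above.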

Once Islam and Melko recognized that this is an inverse problem, they had an idea: what if they could train a neural network to learn the relationship between the experimental parameters and the resulting trapped-ion interactions? There was no way to know whether it would work without trying, so they put two Waterloo undergraduate students, Marina Drygala and Yi Hong Teoh, to work on the problem.

Testing an idea

Yi Hong and Marina found that the neural network, trained on many thousands of synthesized sets of parameters together with the interactions they produce, learned the relationship between the two.

Although training can take hours or days depending on the size of the trapped-ion system, once the network is trained a standard desktop computer can return the experimental parameters needed to simulate a specific quantum interaction in less than a millisecond. Moreover, because the network is solving an inverse problem, checking its answer is easy once the hard work is done: solving the forward equation with the returned parameters shows whether they reproduce the desired interaction.
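The train-once, query-fast, verify-cheaply workflow can be sketched in the same toy setting. In this hypothetical example a nearest-neighbour lookup over synthesized (interaction, parameter) pairs stands in for the trained neural network; it is not a neural network, and only the overall pattern carries over. The three-ion forward model and its mode data are again illustrative assumptions, and `predict` and `verify` are made-up names. The `verify` step shows why checking an answer is cheap: it needs a single forward evaluation.

```python
import math

# Toy forward model, repeated so this block runs on its own. Mode vectors B and
# frequencies OMEGA describe a hypothetical 3-ion chain (arbitrary units).
S3, S2, S6 = math.sqrt(3), math.sqrt(2), math.sqrt(6)
B = [
    [1 / S3, 1 / S3, 1 / S3],
    [-1 / S2, 0.0, 1 / S2],
    [1 / S6, -2 / S6, 1 / S6],
]
OMEGA = [5.0, 4.8, 4.5]


def couplings(mu):
    """Forward model: detuning mu -> couplings (J12, J13, J23), unit Rabi rates."""
    return tuple(
        sum(B[m][i] * B[m][j] / (mu ** 2 - w ** 2) for m, w in enumerate(OMEGA))
        for i, j in [(0, 1), (0, 2), (1, 2)]
    )


def distance(a, b):
    """Euclidean distance between two coupling tuples."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Offline "training": synthesize (interaction, parameter) pairs once.
# A nearest-neighbour table stands in for the neural network in this sketch.
TABLE = [(couplings(mu), mu) for mu in (5.01 + 0.001 * k for k in range(1000))]


def predict(target):
    """Fast inverse: return the stored detuning whose couplings best match target."""
    return min(TABLE, key=lambda row: distance(row[0], target))[1]


def verify(mu, target, rel_tol=1e-2):
    """Cheap check: one forward evaluation, compared with the requested couplings."""
    return distance(couplings(mu), target) <= rel_tol * distance(target, (0.0, 0.0, 0.0))


target = couplings(5.3234)
mu = predict(target)
print(mu, verify(mu, target))
```

A trained network answers in time that does not depend on the size of its training set, whereas this lookup scans every stored entry; the lookup is used only to keep the sketch short and free of dependencies.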

“This inverse problem can be applied to so many different problems in quantum computing,” said Islam. “If you look at very complicated ion chips used by companies like Honeywell and IonQ, they have basically the same problem with voltages and the electrical potential applied to their ions.”

Islam is hopeful that more applications for this machine learning approach will surface, and that quantum computers augmented by machine learning will one day tackle the hardest problems in physics and materials science.

“I think the future of quantum computing will combine the strengths of classical computation like machine learning and the strengths of quantum to solve problems that are very hard for either alone. This result is a great step in that direction.”

The paper, “Machine learning design of a trapped-ion quantum spin simulator,” was published in Quantum Science and Technology on January 21, 2020.

This project is supported in part by the Canada First Research Excellence Fund (CFREF) through the Transforming Quantum Technologies (TQT) program.