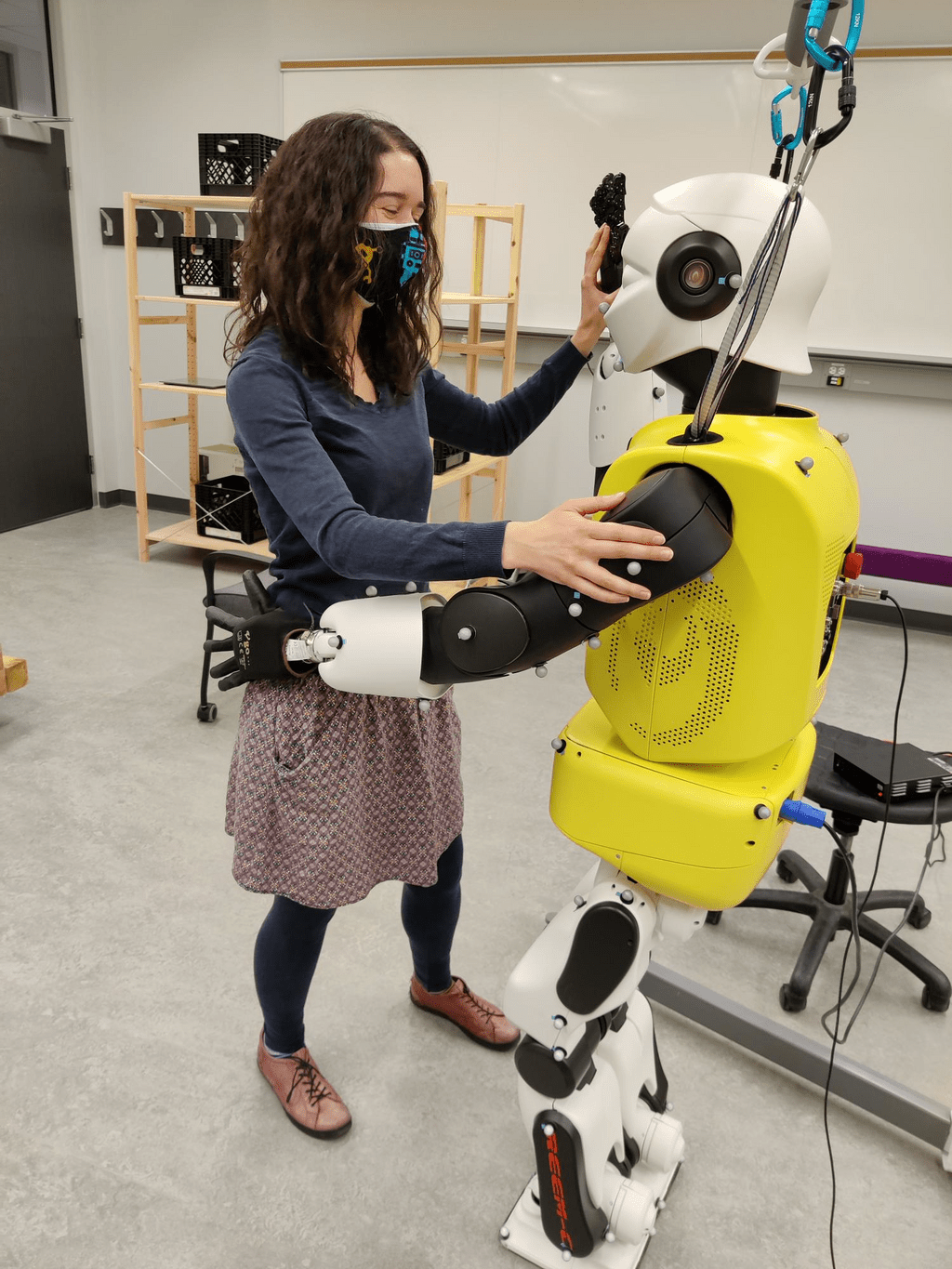

This project develops compliant control approaches for the position-controlled REEM-C that enable close, direct physical human-robot interaction while remaining appropriate for social interaction.

We train robots to solve general tasks using only images.

Wearable computer vision and deep learning are combined for real-time sensing and classification of human walking environments.

This project aims to detect and classify physical interaction between a human and the robot, and the human's intent behind this interaction.

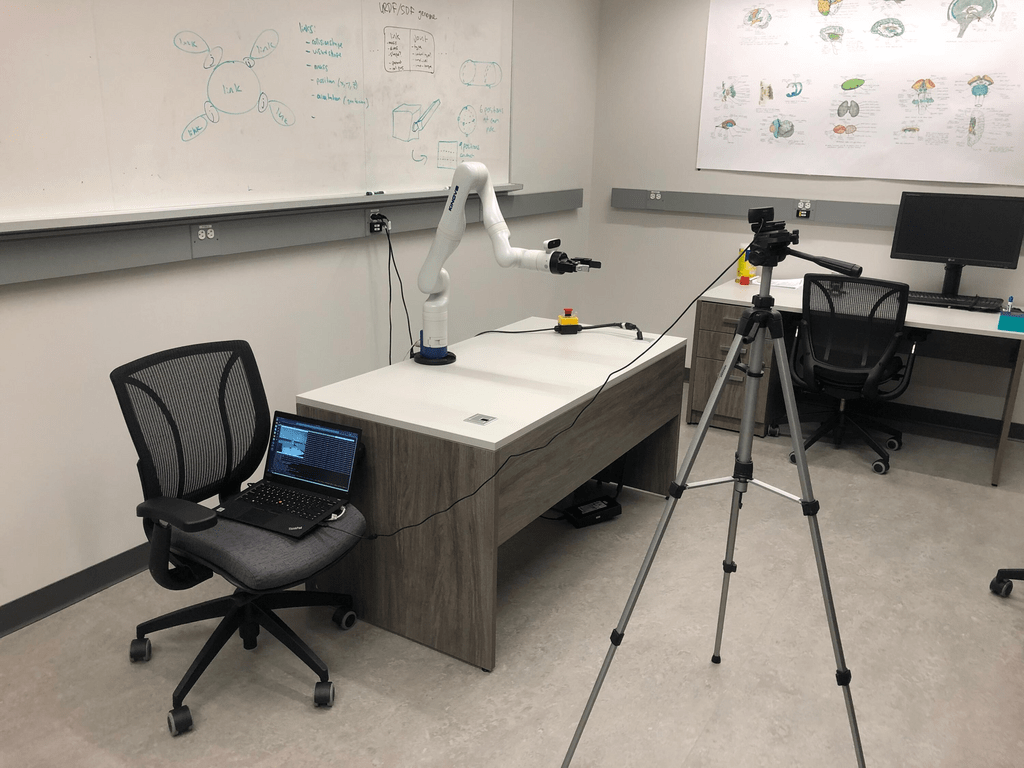

This work aims to ease the implementation and reproducibility of human-robot collaborative assembly user studies.

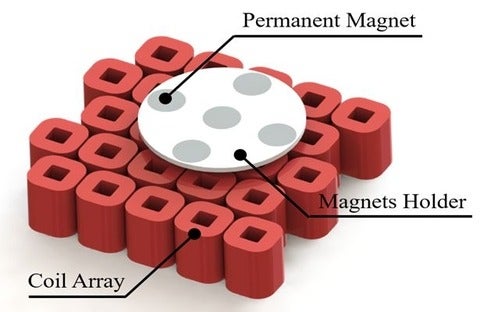

The goal of this project is to levitate a group of robots in 3D space using electromagnetic energy. MagLev (magnetically levitated) robots move without friction and can be positioned precisely, which makes them promising for applications in many fields. Magnetic levitation systems are difficult to control, so a robust controller is crucial both for accurate manipulation in 3D space and for letting the robots reach any desired location smoothly.

This project focuses on deploying a set of autonomous robots to efficiently service tasks that arrive sequentially in an environment over time. A task is serviced when a robot visits the corresponding task location. Robots can then redeploy while waiting for the next task to arrive. The objective is to choose these redeployments by taking into account the expected response time to service tasks that will arrive in the future.
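To make the redeployment objective concrete, here is a minimal sketch, not the project's actual method. It assumes a finite set of task locations with known arrival probabilities, straight-line travel at constant speed, and a brute-force search over a small set of candidate waiting positions. The names `expected_response_time` and `best_redeployment` are hypothetical.

```python
import math
from itertools import combinations


def expected_response_time(robot_positions, task_sites, arrival_probs, speed=1.0):
    """Expected travel time of the nearest robot to the next arriving task.

    Assumption: the next task appears at task_sites[i] with probability
    arrival_probs[i], and the closest robot drives to it in a straight line.
    """
    total = 0.0
    for site, prob in zip(task_sites, arrival_probs):
        nearest = min(math.dist(robot, site) for robot in robot_positions)
        total += prob * nearest / speed
    return total


def best_redeployment(candidate_positions, num_robots, task_sites, arrival_probs):
    """Pick the waiting positions that minimize expected response time.

    Exhaustive search over all placements; only practical for small instances.
    """
    return min(
        combinations(candidate_positions, num_robots),
        key=lambda placement: expected_response_time(
            placement, task_sites, arrival_probs
        ),
    )


if __name__ == "__main__":
    sites = [(0.0, 0.0), (10.0, 0.0)]
    probs = [0.8, 0.2]  # tasks arrive more often at the first site
    candidates = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
    print(best_redeployment(candidates, 1, sites, probs))
    print(best_redeployment(candidates, 2, sites, probs))
```

With a single robot, the sketch parks it at the more frequently requested site. With two robots, it covers both sites. A realistic formulation would also have to handle travel on a road or grid map, task service times, and arrival rates that change over time.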

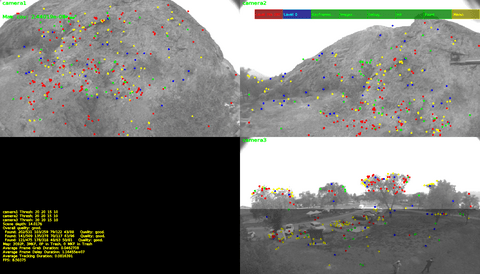

This project brings together several novel components to help solve the problem of multi-camera SLAM with non-overlapping fields of view to generate relative po

Visual navigation algorithms pose many difficult challenges that must be overcome before they can be deployed at scale.

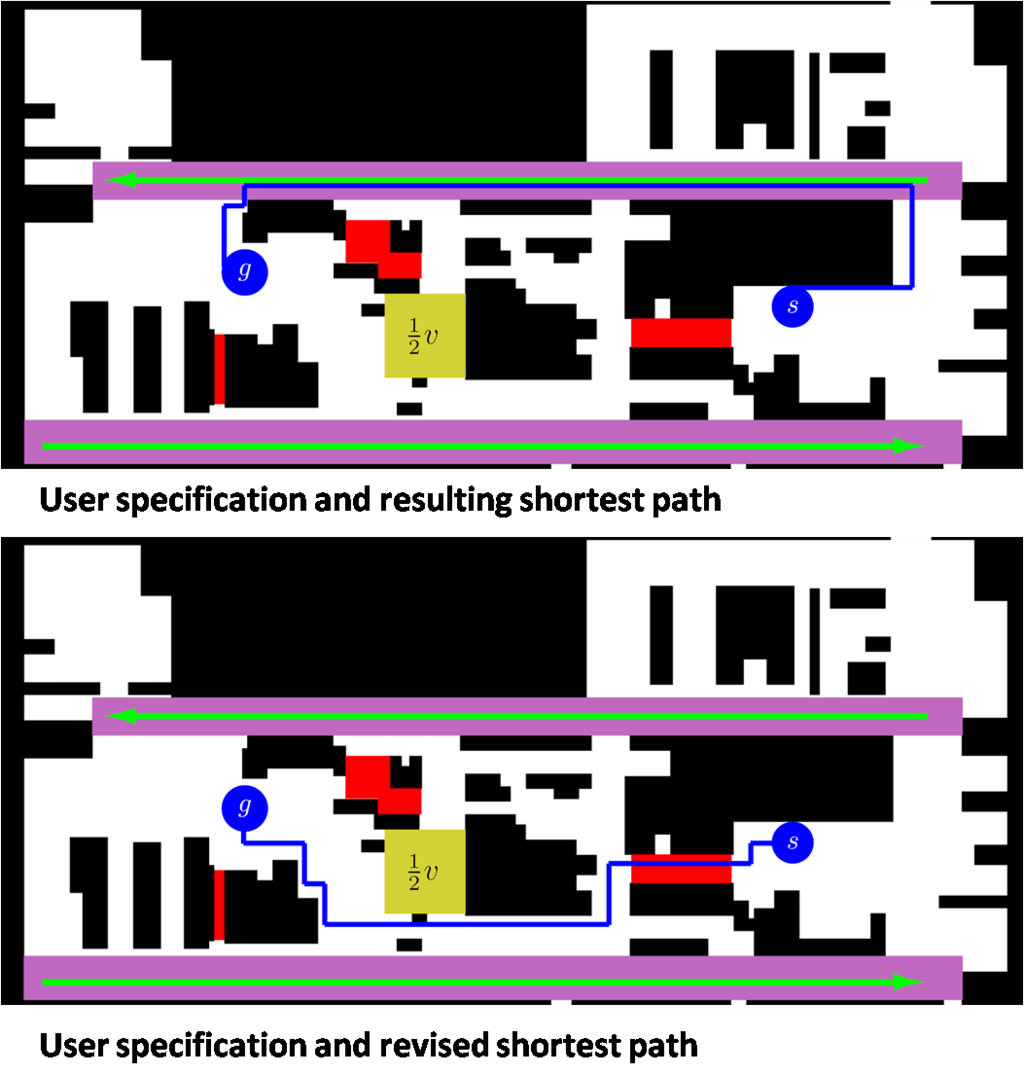

While autonomous robots are finding increasingly widespread application, specifying robot tasks usually requires a high level of expertise. In this work, the focus is on enabling a broader range of users to direct autonomous robots by designing human-robot interfaces that allow non-expert users to set up complex task specifications. To achieve this, we investigate how user preferences can be learned through human-robot interaction (HRI).

The goal of this work is to develop methods and tools that enable reliable dynamic balance and gait for bipedal robots. The project currently focuses on developing and applying methods for quantifying and measuring the kinematic and dynamic properties of the robot that are critical to dynamic bipedal gait and balance.

This work presents a path following controller for a quadrotor vehicle.

The main motivation for this project is to improve the model of dynamical systems, beyond the physics-based models, by incorporating measurements of the model o