The key to people trusting and co-operating with artificially intelligent agents lies in their ability to display human-like emotions, according to a new study by Postdoctoral Fellow Moojan Ghafurian, Master’s candidate Neil Budnarain and Professor Jesse Hoey at the Cheriton School of Computer Science.

The World Economic Forum expects that more machines will become part of the workforce as technological breakthroughs rapidly change the workplace. Based on the findings of this trio of computer science researchers, developing the humanness of AI machines may improve people’s acceptance of them in the workplace.

Moojan Ghafurian is a postdoctoral fellow in the David R. Cheriton School of Computer Science and a member of the Computational Health Informatics Lab (CHIL). Her research interests span the areas of human-computer interaction, artificial intelligence and cognitive science. She holds the inaugural Wes Graham Postdoctoral Fellowship.

“The capability of showing emotions is important for AI agents, especially if we want users to trust the agents and co-operate with them,” said Moojan Ghafurian, lead author of the study. “Improving humanness of AI agents could enhance society’s perception of assistive technologies which going forward will improve people’s lives.”

In undertaking the study, Ghafurian and her co-authors Professor Hoey and Neil Budnarain used a classic game called “The Prisoner’s Dilemma.”

The original version of the game sees two prisoners isolated from one another and then questioned by police for a crime they committed together. If one of them snitches and the other doesn’t, the non-betrayer gets three years, and the snitch walks. This works both ways. If both snitch, they both get two years. If neither one snitches, they each get only one year on a lesser charge.
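The sentences described above can be written as a small payoff table. The Python sketch below is only an illustration of the classic game, not code from the study; the names `SENTENCES` and `best_response` are hypothetical. It shows why the game is a dilemma: snitching is each prisoner's best reply whatever the other does, yet both staying silent leaves each of them better off than both snitching.

```python
# Sentences in years (lower is better), keyed by (my choice, other's choice).
# The values are the ones given in the article; the names are illustrative.
SENTENCES = {
    ("silent", "silent"): (1, 1),   # neither snitches: one year each
    ("silent", "snitch"): (3, 0),   # I stay silent, the other snitches
    ("snitch", "silent"): (0, 3),   # I snitch, the other stays silent
    ("snitch", "snitch"): (2, 2),   # both snitch: two years each
}

def best_response(other_choice):
    """Return the choice that minimises my own sentence, given the other's choice."""
    return min(("silent", "snitch"),
               key=lambda mine: SENTENCES[(mine, other_choice)][0])

for other in ("silent", "snitch"):
    print(f"If the other prisoner chooses {other!r}, my best reply is {best_response(other)!r}")
```

In the study's version the payoffs are coins to be maximised rather than years to be minimised, so the same comparison would use `max` instead of `min`; the coin values themselves are not given in this article.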

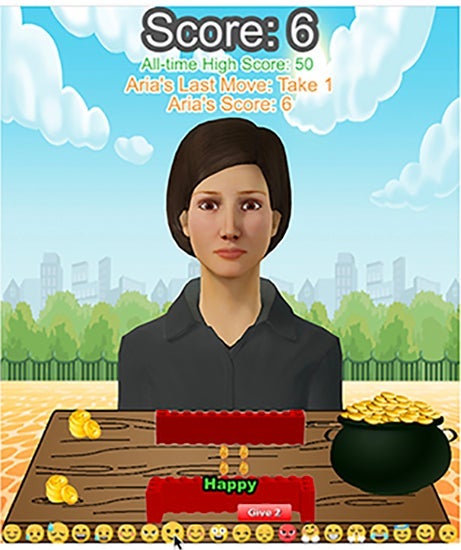

Waterloo’s study substituted one of the human ‘prisoners’ with an AI virtual human and allowed each player to interpret the other’s emotions. Instead of prison sentences, the game used gold coins, so the aim was to get the highest score possible, as opposed to the lowest. The virtual human was developed by the Interactive Assistance Lab at the University of Colorado, Boulder, in the context of a program to help people with cognitive disabilities, particularly relating to the perception of emotional signals.

An example of the game’s setting. The two coin piles on the left show the number of coins that the participant and Aria, the virtual agent, have earned so far in the game. Currently, the player has chosen to give two coins and look “happy” for the next round. Aria’s current emotion is “sorry”.

The researchers used three different virtual agents that had the same strategy but reflected different emotions. One of them didn’t show any emotion, one of them showed appropriate emotions, and the other generated random emotions.

The 117 participants were then randomly paired with an agent and asked to rate how human-like they perceived it to be. The researchers also observed how the participants interacted with the agents, for example, how many times they co-operated.

On average, people co-operated 20 out of 25 times with the agents that showed human-like emotion. For the one showing random emotions, they co-operated 16 times out of 25, and for the one showing no emotion, co-operation occurred 17 out of 25 times.
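For a quick comparison, the counts above can be expressed as co-operation rates. The short Python sketch below uses only the figures reported here; the dictionary labels are illustrative, and it assumes that the agent showing "human-like emotion" is the one described earlier as showing appropriate emotions.

```python
# Average number of co-operative choices out of 25, as reported in the article.
ROUNDS = 25
avg_cooperations = {
    "appropriate emotions": 20,  # assumed to be the "human-like emotion" agent
    "random emotions": 16,
    "no emotion": 17,
}

# Convert each count into a share of the 25 rounds.
rates = {agent: count / ROUNDS for agent, count in avg_cooperations.items()}

for agent, rate in rates.items():
    print(f"{agent}: {rate:.0%}")
```

This works out to 80 per cent for appropriate emotions, 64 per cent for random emotions and 68 per cent for no emotion.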

“Based on our findings it’s better to show no emotion rather than random emotions, as the latter would make that agent look less rational and immature,” said Moojan Ghafurian. “But showing proper emotions can significantly improve the perception of humanness and how much people enjoy interacting with the technology.”

The researchers want to eventually design assistive technology for people with dementia that they will feel comfortable using. This research is an important step in that direction.

The study, titled “Role of Emotions in Perception of Humanness of Virtual Agents” and authored by Ghafurian, Hoey, and Budnarain, was presented recently at the 18th International Conference on Autonomous Agents and Multiagent Systems (AAMAS).