A trio of Cheriton researchers have developed an AI-powered tool that can accelerate software debugging via test automation.

One of the first steps in software debugging is bug reproduction, where a programmer replicates a bug to understand its behaviour. The replication steps can then be converted into a bug reproduction test (BRT), a test that fails if the bug is present and passes once it’s gone.

“As a software engineer, you must be able to reproduce any problems that your customer is facing before you attempt to solve them,” explains Noble Saji Mathews (MMath ’25), a computer science alum. “Let’s say they clicked on a button and the entire program crashed. So, if I make changes to the software and run a BRT, in this case, clicking on the same button as my customer and seeing if the program will crash, I can confirm if the bug has been fixed.”
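As a rough illustration of the idea, the following Python sketch shows a BRT for a crash of the kind Mathews describes. The `SignUpForm` class, its `fixed` flag and the `click_submit` method are hypothetical names invented for this example; they are not taken from AssertFlip or from any real application.

```python
# Hypothetical application code, invented for illustration only.
class SignUpForm:
    def __init__(self, fixed: bool = False):
        # `fixed` switches between the buggy and the repaired behaviour.
        self.fixed = fixed

    def click_submit(self, email: str) -> str:
        if email == "":
            if not self.fixed:
                # The bug: an empty email crashes the handler.
                raise RuntimeError("unhandled empty email")
            # The repaired behaviour: reject the input gracefully.
            return "error: email required"
        return "ok"


def bug_reproduction_test(form: SignUpForm) -> bool:
    """Return True if the test passes (bug gone), False if it fails (bug present)."""
    try:
        # Repeat the customer's action: click submit with an empty email.
        return form.click_submit("") == "error: email required"
    except RuntimeError:
        return False
```

Run against `SignUpForm(fixed=False)`, the test fails; run against `SignUpForm(fixed=True)`, it passes, which is the behaviour that defines a BRT.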

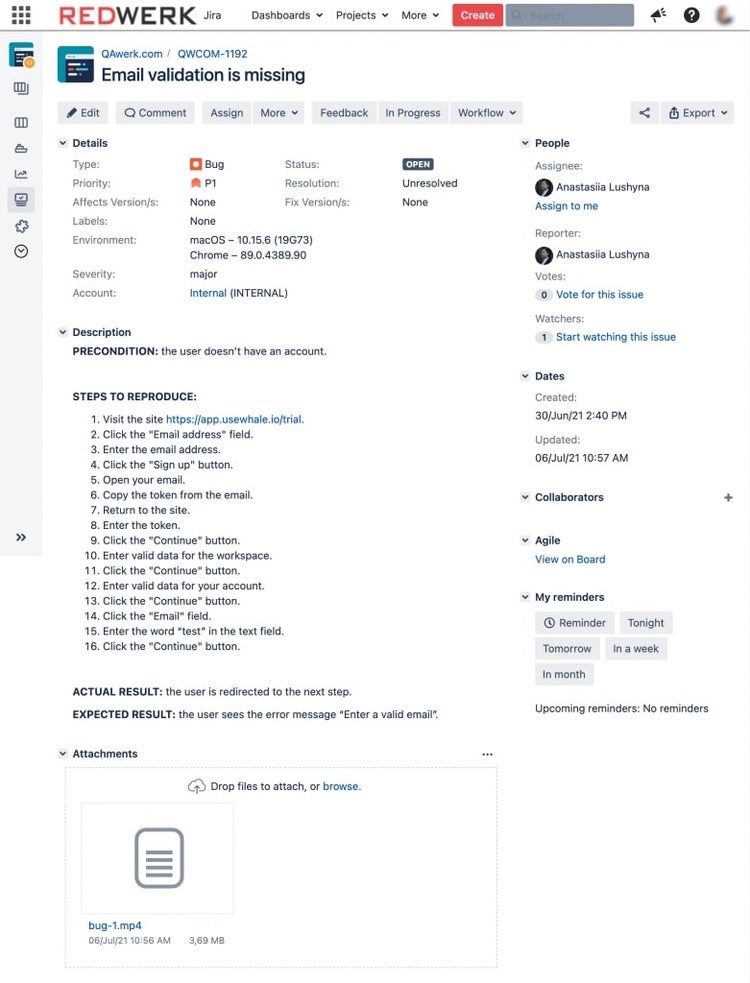

An example of a BRT, where a programmer explains how to solve an error message for an email sign-up. BRTs are key to diagnosing and debugging software bugs (Source: QAwerk)

However, BRTs are usually written only after the bug is repaired, to confirm the fix and prevent recurrence. Until then, programmers can spend hours manually reproducing bugs, which further delays the fix. If they had a BRT from the get-go, they could reserve this time for problem-solving.

A trio of Waterloo computer scientists (L to R: Professor Meiyappan Nagappan, Noble Saji Mathews and Lara Khatib) created an AI-powered tool that can automate bug reproduction tests

Fortunately, Lara Khatib, a research associate at the Cheriton School of Computer Science, teamed up with Professor Meiyappan Nagappan and Mathews, his former graduate student, to create AssertFlip, a tool that can automate BRTs.

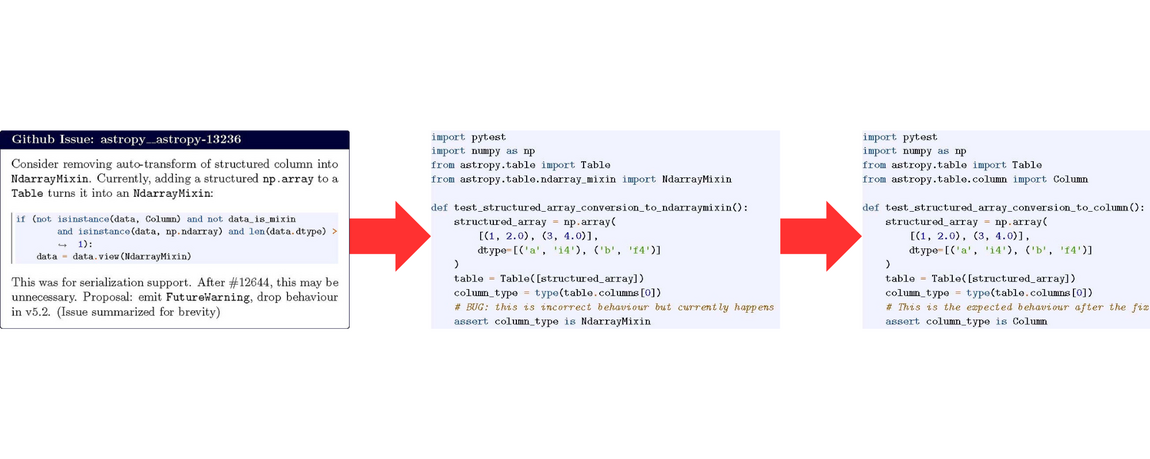

“Instead of asking the LLM to write a test that fails right away, we first instruct it to generate a passing test on the buggy version that captures the behaviour described in the issue report,” explains Khatib. “Once we have a working test, we flip it. We modify the conditions so that the test now reflects the correct behaviour.”

As a result, AssertFlip can direct developers to the buggy logic that needs to be fixed, making troubleshooting faster and more streamlined. Once the bug is repaired, they can also use the generated BRT to validate the fix.
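The pass-then-flip idea Khatib describes can be sketched in a few lines of Python. This is a toy under stated assumptions, not AssertFlip's actual code: `add_column_buggy`, `add_column_fixed` and both test functions are hypothetical, and the "bug" (a tuple silently converted to a list) is only loosely modelled on the data-type change mentioned in the article.

```python
# Hypothetical buggy and fixed versions of the same function.
def add_column_buggy(table: dict, name: str, values: tuple) -> None:
    table[name] = list(values)  # bug: silently changes the data type


def add_column_fixed(table: dict, name: str, values: tuple) -> None:
    table[name] = values  # expected: the data type is preserved


def passing_test(add_column) -> bool:
    """Step 1: a test that passes on the buggy version by asserting what the code does today."""
    table = {}
    add_column(table, "a", (1, 2))
    return isinstance(table["a"], list)


def flipped_test(add_column) -> bool:
    """Step 2: the same test with its condition flipped to assert the correct behaviour."""
    table = {}
    add_column(table, "a", (1, 2))
    return isinstance(table["a"], tuple)
```

Here `passing_test(add_column_buggy)` succeeds, which confirms the test exercises the reported behaviour, while `flipped_test` fails on the buggy version and passes on the fixed one.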

Oftentimes, LLM-generated BRTs fail for reasons unrelated to the bug, such as setup mistakes, AI-induced hallucinations or bad imports, which makes spotting and fixing bugs even harder. To overcome this problem with earlier LLM-based BRT tools, AssertFlip starts an automated refinement loop whenever a generated test fails to run, using execution feedback to iteratively correct the test code.
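A refinement loop of this kind might look like the minimal sketch below. It assumes a `generate` callable standing in for the LLM, a `max_attempts` limit and a `None` return value when no runnable test is found; all three are assumptions made for this example rather than details confirmed by the researchers.

```python
from typing import Callable, Optional


def run_test(source: str) -> Optional[str]:
    """Execute test source code; return None on success, else the error text."""
    try:
        exec(source, {})
        return None
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"


def refine(generate: Callable[[Optional[str]], str], max_attempts: int = 3) -> Optional[str]:
    """Ask for a test, run it, and feed any error back until it runs or attempts run out."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(feedback)   # hypothetical stand-in for an LLM call
        feedback = run_test(candidate)   # execution feedback for the next round
        if feedback is None:
            return candidate             # the test ran cleanly
    return None                          # no runnable test: give no answer


def fake_llm(feedback: Optional[str]) -> str:
    """Toy generator: the first draft has a bad import, the second draft fixes it."""
    if feedback is None:
        return "import no_such_module_for_demo\nassert True"
    return "assert 1 + 1 == 2"
```

With the toy generator, `refine(fake_llm)` returns the corrected test on the second attempt, while a generator that never produces a runnable test yields `None`.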

An overview of AssertFlip. In the first photo, a bug report describes incorrect behaviour, in this case, adding a structured array to an Astropy table automatically changes the data type. AssertFlip will then generate a test that passes on the buggy code. Finally, it will reverse the passing test’s conditions to create a bug-revealing test.

This tool is an amalgamation of the researchers’ previous software engineering research. AssertFlip rests on their hypothesis that LLMs are better at writing tests that “pass” than tests that “fail on purpose.”

“If you let an LLM go without any checks and balances in place, it would agree on whatever you’re saying,” explains Mathews.

The researchers also hope that their design can inspire software engineers to create “trustworthy” AI systems.

“If you ask ChatGPT something, it’ll never say that it doesn’t know,” Professor Nagappan says. “It’ll always give you an answer, sometimes the wrong one. But in software engineering, a wrong answer can be very costly. What’s inherent to our technique is that our tool doesn’t always give an answer. If AssertFlip isn’t confident it has produced a good result, it simply won’t give one. We think that ability to abstain can be leveraged in the design of AI tools to make them more trustworthy.”

Notably, AssertFlip outperforms all open-source tools on SWT-Bench, a leaderboard that ranks BRT tools. It achieved a fail-to-pass success rate of 43.6 per cent, while the closest open-source tool came in at 27.7 per cent.

The researchers have open-sourced AssertFlip for the software engineering community.