Santhoshini Velusamy joined the Cheriton School of Computer Science as an Assistant Professor in December 2025. Before coming to Waterloo, she was a Research Assistant Professor at Toyota Technological Institute at Chicago, where her research was supported by a National Science Foundation grant. Previously, she was a research fellow in the Sublinear Algorithms program at Simons Institute for the Theory of Computing.

Professor Velusamy earned her PhD in Computer Science from Harvard University in 2023, under the supervision of Professor Madhu Sudan. She is the recipient of a Google PhD Fellowship and a National Science Foundation Computer and Information Science and Engineering Research Initiation Initiative award.

Her research interests lie in theoretical computer science, specifically the design and analysis of algorithms in modern computational settings such as streaming, as well as proving hardness of approximation. She is also interested in algorithmic game theory.

What follows is a lightly edited transcript of a conversation with Professor Velusamy, where she discusses her research, advice for aspiring computer scientists, and what excites her about joining the Cheriton School of Computer Science.

Tell us a bit about your research.

Most of my research focuses on developing algorithms for settings involving massive datasets. Fifty years ago, if I were trying to solve a matching problem, the graph might have had 50 nodes. Back then, the entire graph could be stored in RAM or cache memory and accessed whenever needed.

Today, however, graphs can contain tens of millions of nodes. At that scale, storing the entire graph in memory and accessing it freely is no longer practical. Even simply accessing the data can incur significant computational costs.

My research centres on the question of how to solve optimization problems when the input is too large to be stored in RAM. One productive setting for studying this challenge is the streaming model, in which we assume the algorithm has only a small amount of memory and observes the data sequentially, one element at a time.

There are two ways to think about this. In the first, data arrives as a stream and the algorithm must process it on the fly with limited memory. In the second, data may reside in a large centralized system where random access is expensive but sequential access is comparatively cheap. Streaming algorithms remain useful in both cases because they process data sequentially and thereby reduce access costs.

My research focuses on developing algorithms and understanding the limits of what these kinds of space-constrained algorithms can do when solving fundamental optimization problems. This is theoretical work, but it has some practical applications.

Say I want to implement a classic algorithm on data stored on a disk. That algorithm will typically make multiple random accesses, and when we analyze running time, we usually treat each access as taking one unit of time. Streaming algorithms can be used to speed up such algorithms by replacing random access with sequential access — and in doing so, achieve much faster run times.

I’m also interested in algorithmic game theory, an area that tends to be more applied. For example, I've worked on designing auctions that are trustworthy for bidders by design while still achieving at least a constant fraction of the revenue of an optimal auction. More broadly, I’m drawn to online algorithms, auction theory, fair allocation of items, and related questions.

What do you see as your most significant contribution?

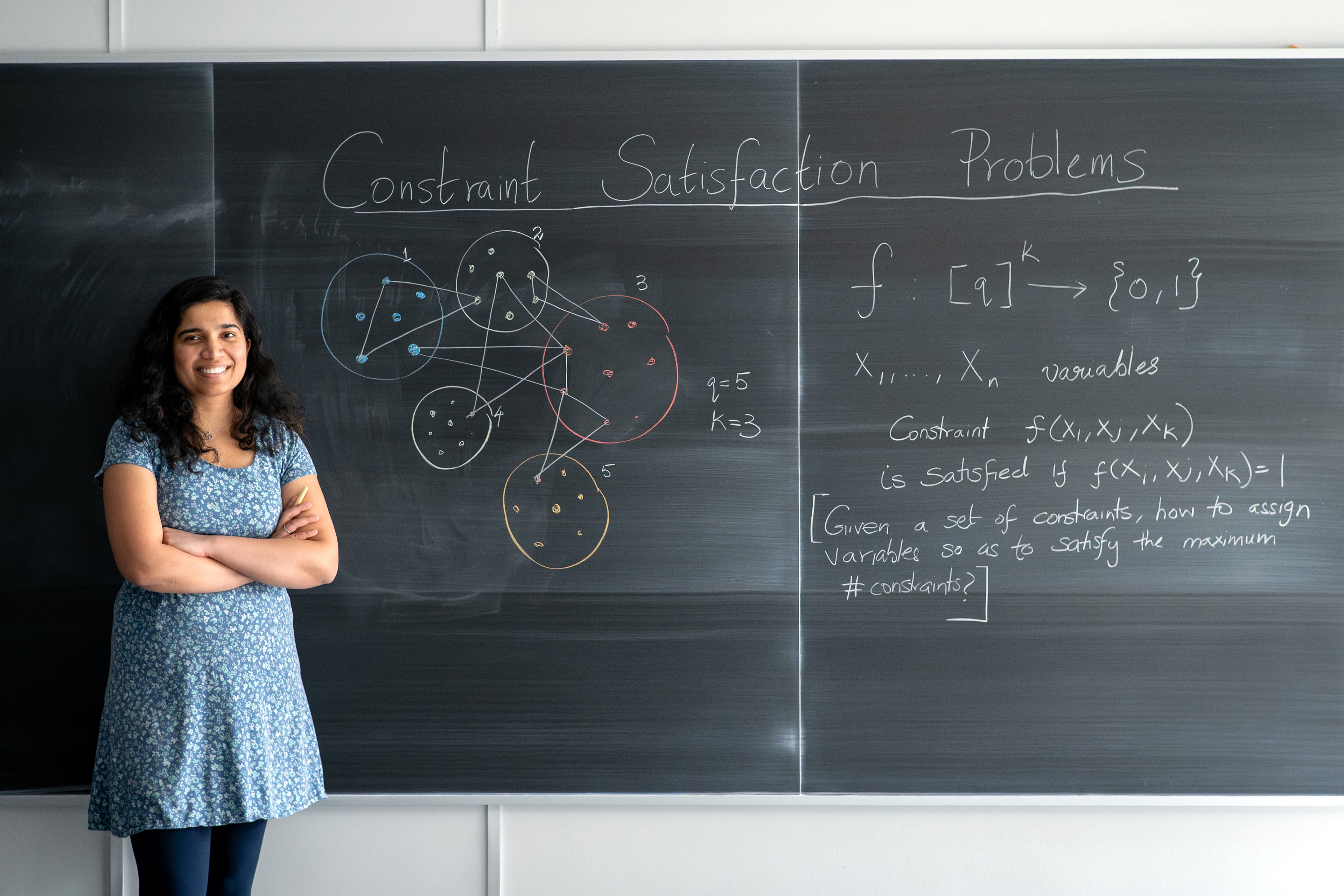

One of my most significant contributions has been helping to establish a new area within streaming algorithms through the study of constraint satisfaction problems. Broadly speaking, these problems involve a set of variables and constraints on those variables, and the goal is to find an assignment that satisfies as many constraints as possible.

This arises in many domains — in scheduling, for example, where hard and soft conflicts are common. Or, in an academic context, consider course allocation where students have preferences. Those preferences are the constraints, and the goal is to satisfy as many of them as possible.

Constraint satisfaction problems are fundamental and appear everywhere in computer science. Given how fundamental they are and the scale of the problems we encounter today, it’s natural to ask whether you can develop streaming algorithms to solve them.

This question was first posed roughly two decades ago. Some years later, a group of researchers studied Max-Cut, a central problem within the broader class of constraint satisfaction problems. I call it central because even in the classical setting — where polynomial-time algorithms were developed — it was Max-Cut that first inspired our most sophisticated techniques, which were then generalized to the whole class. It was the natural starting point.

That paper surprisingly established that no streaming algorithm can achieve anything meaningful for Max-Cut: there is not even a single nontrivial approximation. The field then went quiet on the assumption that if Max-Cut was hard, everything else probably was too.

This work began when I was an undergraduate intern at Carnegie Mellon University. The professor advising me suggested: “We know Max-Cut is hard — but maybe you can prove that a related problem, Max-Directed Cut, is hard too. Why don’t you think about it?”

I wasn’t able to prove hardness, but I found an algorithm that solves Max-Directed Cut — the first result of its kind in this area. During my PhD, my advisor, several co-authors, and I developed the theory further, showing that the full class of constraint satisfaction problems admits a complete characterization of what approximate optimization is achievable in the streaming model.

Many open questions remain, but this work has grown into its own subarea, developed across a series of papers.

What challenges in the design and analysis of algorithms do you find exciting to tackle?

In my research, I aim to develop techniques for broad classes of problems considered together rather than problems in isolation. Constraint satisfaction problems are one example. Working at this level of generality is rewarding because a unified theory often reveals something intrinsic about the structure of the problems themselves.

Another line of research I’m pursuing with a Waterloo graduate student involves learning Boolean functions under limited memory. Specifically, if we are learning a Boolean function from random samples, how much memory is required for a given number of samples? We have obtained a complete characterization for all symmetric Boolean functions and are now working toward more general cases.

We have observed that these bounds naturally connect to intrinsic properties of the function itself. For example, there is a notion called the sensitivity of a Boolean function. Could the trade-off between memory and the number of samples be intrinsically tied to that sensitivity?

Questions like these excite me — and pursuing them tends to reveal something deep about the nature of these problems.

What advice do you have for students interested in pursuing research in your area?

I work in theoretical computer science, which sits at the intersection of mathematics and computer science. In many ways, my work is closer to mathematics than to engineering, so a strong mathematical foundation is essential. We expect both depth and breadth, and my advice to students is simply to take as many math and theoretical computer science courses as you can.

One of Waterloo’s great strengths is its Faculty of Mathematics, which offers courses across several departments that complement computer science. The breadth of courses here — especially in theory — exceeds what I encountered as a PhD student at Harvard. I encourage students to take full advantage of that opportunity.

My other piece of advice is to pursue an undergraduate research assistantship. Academia has become highly competitive — noticeably more so than when I was a student. Getting research experience as an undergraduate and publishing your results makes a real difference. I also encourage students to apply for undergraduate research fellowships, which offer the chance to work directly with a supervisor on a focused problem. I’ve already seen this at Waterloo. A number of students have reached out saying, “I’m really interested in your research — can we meet and explore possibilities?” It’s remarkable. I saw this kind of initiative occasionally at Harvard; it’s rare elsewhere, but it seems to be the norm at Waterloo. That drive — students in their first year seeking out faculty to do research with — is genuinely inspiring.

Do you see opportunities for collaborative research at the Cheriton School of Computer Science?

I see strong opportunities for collaboration within the Algorithms and Complexity group at the School of Computer Science, and more broadly with faculty in the Department of Combinatorics and Optimization, whose work overlaps significantly with mine.

The opportunity for collaborative research strongly influenced my decision to come to Waterloo. If I count all the faculty here whose research intersects with mine, it’s easily 30 or more researchers across the Faculty of Mathematics.

I’ve had several conversations with my colleagues and I’m excited about what the future holds and what we might accomplish together.

For instance, Professor Kate Larson at the School of Computer Science also works on algorithmic game theory, and she connected me with a colleague in the economics department. We’re hoping to start a reading group together exploring our shared interests. In game theory, the path from theory to application is relatively direct, which means this research can have immediate real-world impact.

Who has inspired you in your career?

I’d like to mention two people: my mother and my PhD advisor.

I’ll start with my mother. I grew up in a deeply academic environment. My mom is a professor of computer science working in applied areas — computer networks and digital forensics. We lived in a gated campus community centred on the university, surrounded by faculty and students. All my neighbours were academics.

From a young age, I was encouraged to teach. When school let out, the bus didn’t drop me at home — it dropped me at my mother’s department. I would go to her office, and she would ask me to teach her what I had learned that day.

It wasn’t simply a memory check. Teaching helped clarify and deepen my own understanding. Teaching became woven into my life from an early age.

Former students — some from years or even decades earlier — would return to thank my mother for the difference she had made in their lives. Witnessing that firsthand moved me deeply. It is what made me want to teach.

Teaching is a learning experience for the teacher as well. To convey a concept clearly to someone at any stage of understanding, you need to know the material deeply yourself. For me, there was never a choice between industry and academia — I always knew I wanted to be an academic, and my mother was instrumental in setting me on that path.

My PhD advisor has also been a profound influence, particularly in how he works with students. He may be the most prolific mentor in my field in terms of the number of students he has advised — roughly 90 percent of whom have gone on to academic positions, many at leading universities. One of his former students is now director of the Simons Institute for the Theory of Computing.

Much of this success can be attributed to his advising style. He never pressures students to work on a particular problem; instead, he follows their interests. All of his work with me was on streaming algorithms, while other students pursued differential privacy, circuit complexity, or whatever they were deeply interested in. He is fundamentally an advisor who adapts to the student, not the other way around. That approach is his hallmark, and it is something I hope to carry forward with my own students.

When students are working on something they genuinely care about, they invest more deeply. I am inspired by that philosophy and aspire to be the same kind of advisor. In addition to directing students toward a problem of my choosing, I want to ask what interests them — and find a space where we are both passionate and can explore it together. Most of the students I have mentored through internships have benefited from this approach, and many of those experiences have led to strong publications.

What do you do in your spare time?

I have a three-year-old daughter, so most of my spare time revolves around her.

I’m also something of an escape room enthusiast. My husband and I have been working our way through the ones in the area.

Before my daughter arrived, I used to read fantasy novels, though finding time for that has become difficult. For a creative outlet, I now take a weekly acrylic painting class. I won’t claim to be a talented artist, but it’s wonderfully relaxing.