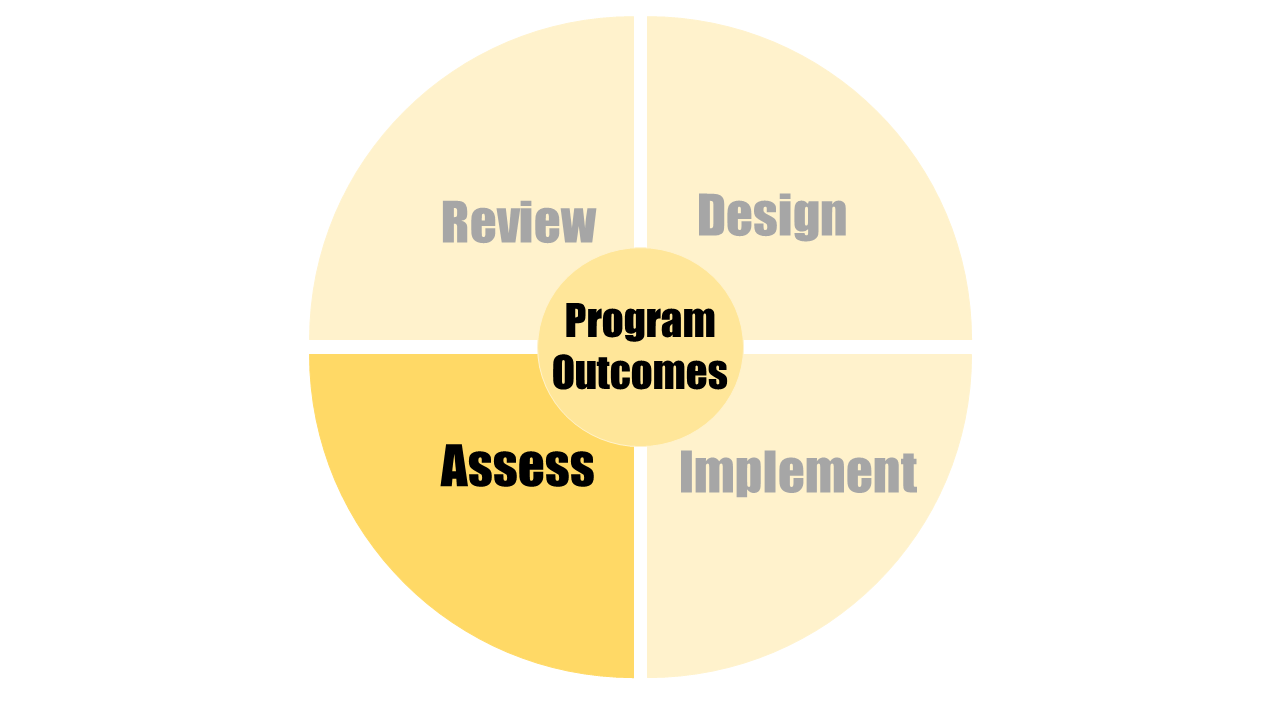

As part of the curriculum design and renewal process, two types of evaluation might occur.

The first type is an assessment of the impact of the curricular change that has been implemented.

The second type relates to the external assessment of an entire curriculum (i.e., through formal program review and accreditation), which is explored in the Program Review and Accreditation section.